AI agents are no longer a futuristic concept-they are becoming integral to enterprise operations. LangChain's recent survey of over 1,300 professionals highlights how organizations are deploying agents, the barriers they face, and the engineering practices shaping this rapidly evolving field.

Adoption Trends: Large Enterprises Lead the Way

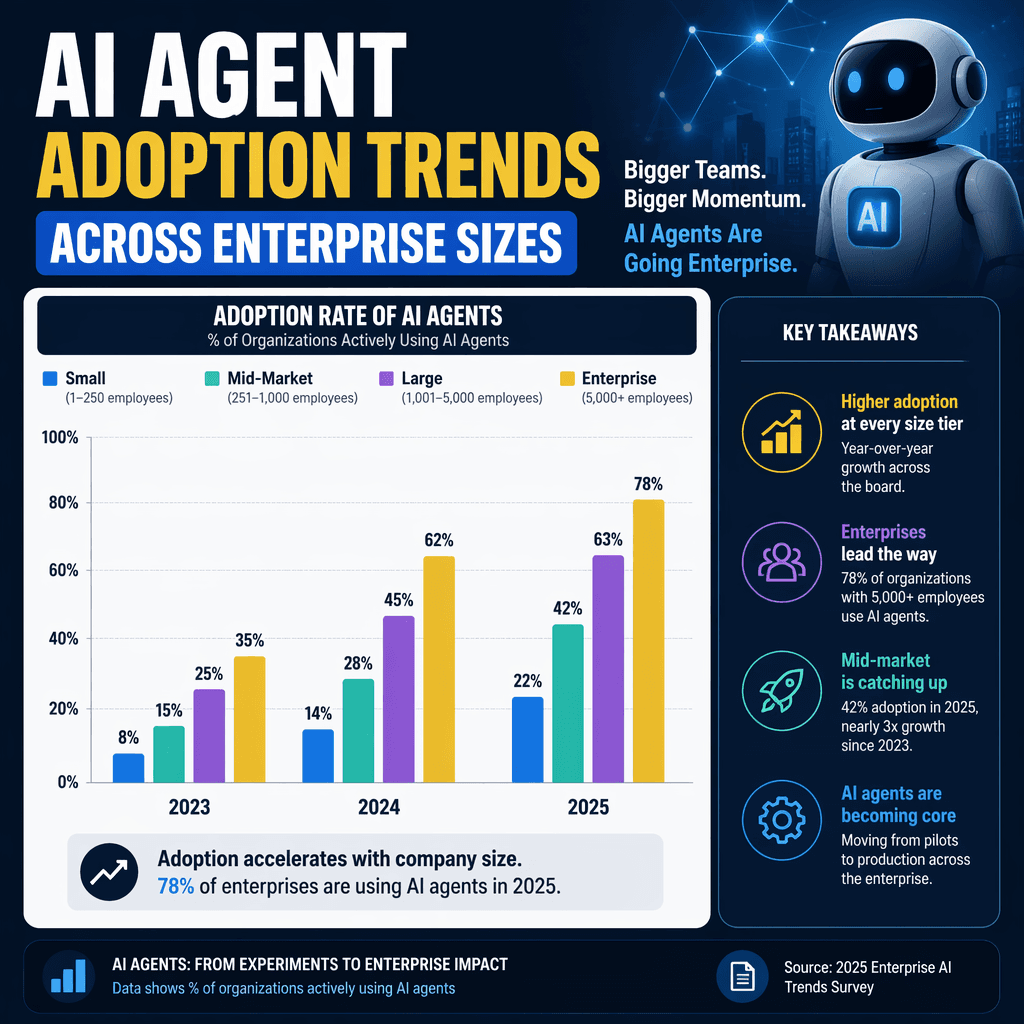

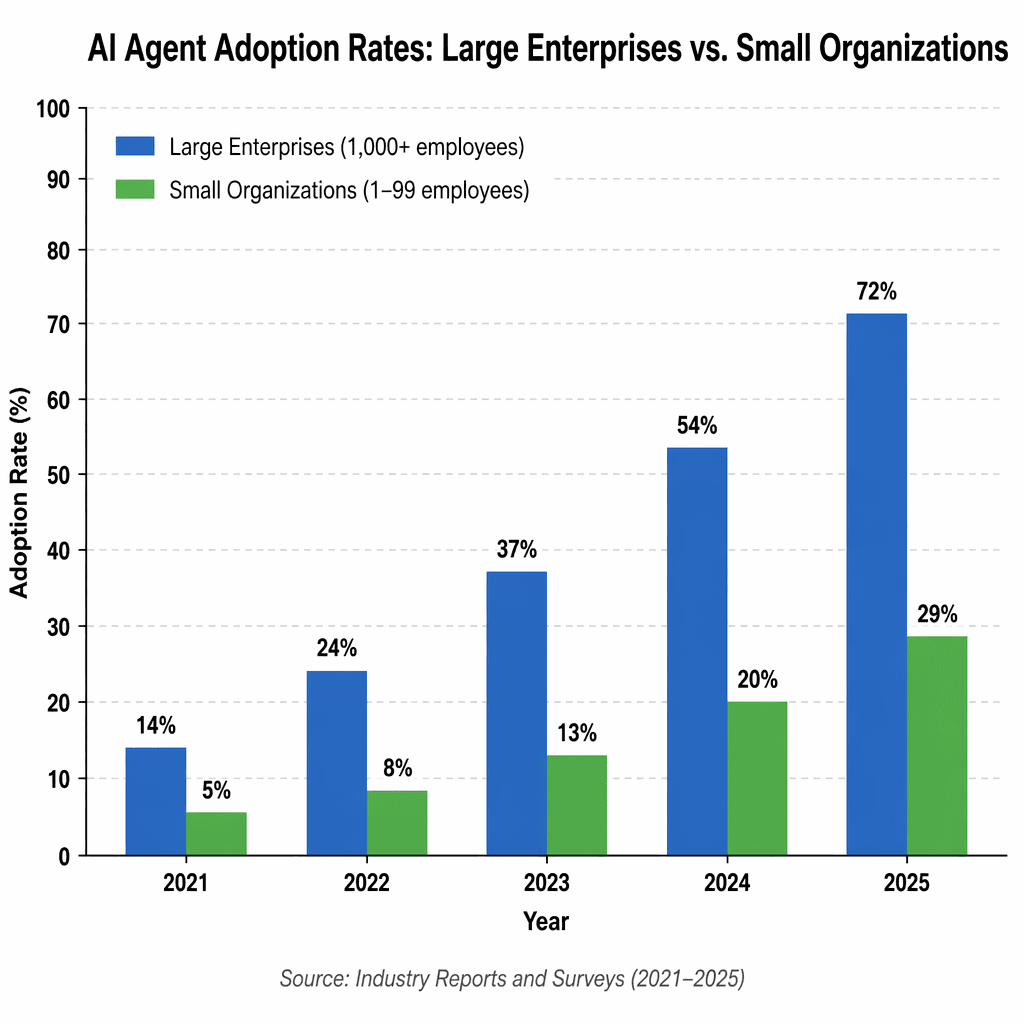

The survey found that 57% of respondents have AI agents in production, with large enterprises leading adoption. Among organizations with over 10,000 employees, 67% have agents deployed, compared to 50% for smaller organizations. This disparity reflects the resources larger enterprises can allocate to platform teams, security, and reliability infrastructure.

Interestingly, 30% of respondents are actively developing agents with plans for production. This signals a shift from proof-of-concept projects to scalable deployments. For smaller organizations, the focus remains on overcoming initial hurdles, while larger enterprises are optimizing for durability and efficiency.

Leading Use Cases: Customer Service and Research Dominate

Customer service emerged as the most common use case, accounting for 26.5% of deployments. Research and data analysis followed closely at 24.4%. These use cases highlight the dual focus of AI agents: enhancing customer interactions and accelerating internal knowledge work. Notably, internal workflow automation also gained traction, representing 18% of deployments.

For larger enterprises, internal productivity leads as the top use case, with customer service and research following. This suggests that big organizations prioritize efficiency gains across teams before deploying agents externally.

Barriers to Production: Quality and Latency

Quality remains the top barrier to production, cited by 32% of respondents. This includes challenges like accuracy, relevance, and consistency. Latency emerged as the second-largest concern, reflecting the importance of response times in customer-facing applications. Interestingly, cost concerns have diminished, likely due to falling model prices and improved efficiency.

For enterprises, security is a significant blocker, cited by 24.9% of respondents. This underscores the need for robust safeguards when deploying agents at scale. Additionally, hallucinations and context management were frequently mentioned as ongoing challenges.

Observability: A Non-Negotiable Requirement

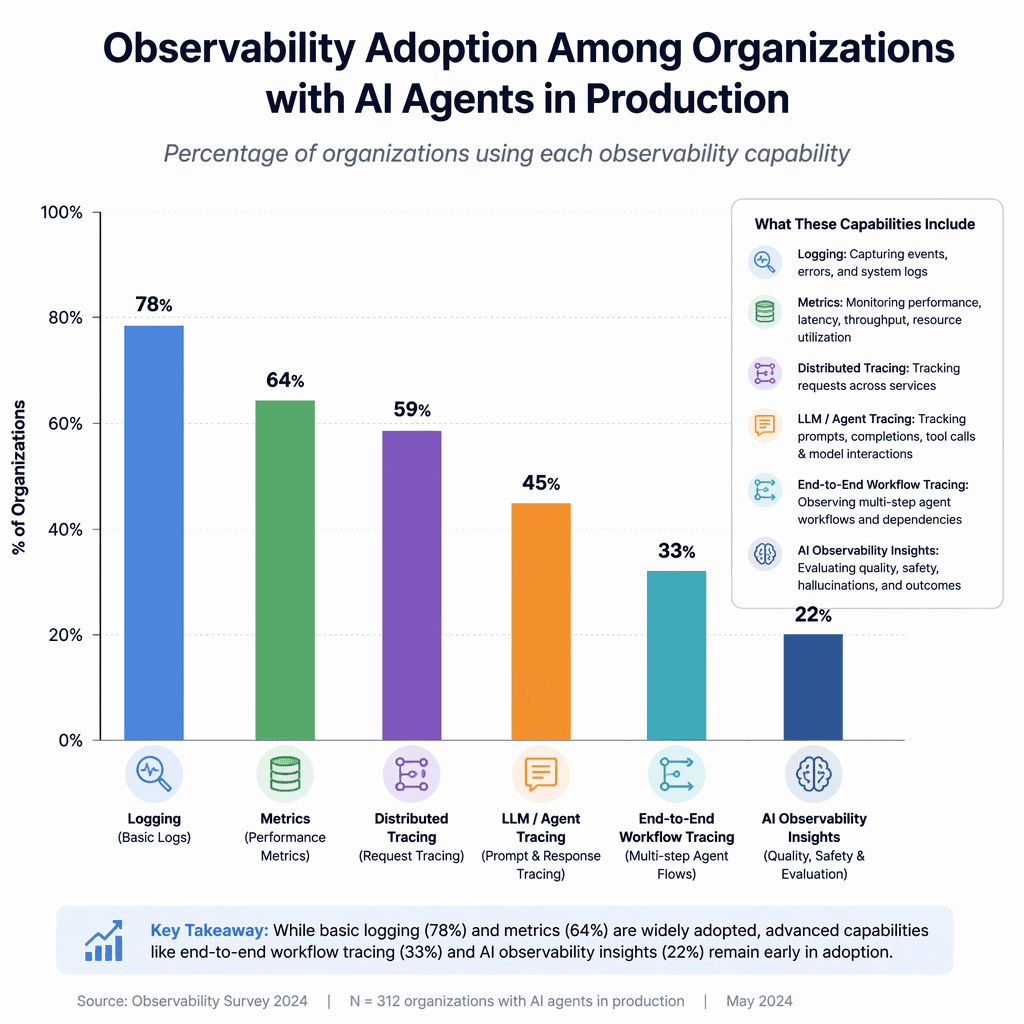

Observability has become table stakes for AI agents. Nearly 89% of organizations have implemented some form of observability, with 62% enabling detailed tracing of agent steps and tool calls. Among those with agents in production, adoption is even higher-94% have observability systems, and 71.5% offer full tracing capabilities.

This level of visibility is critical for debugging failures, optimizing performance, and building trust. Without it, teams struggle to refine agent behavior or ensure reliability in complex workflows.

Key Takeaways

- Large enterprises are driving AI agent adoption, with 67% having agents in production.

- Customer service and research are the leading use cases, highlighting external and internal applications.

- Quality and latency are the biggest barriers to production, while cost concerns are declining.

- Observability is essential for debugging, optimizing, and scaling AI agents.

- Security and context management are critical challenges for large-scale deployments.

"Without visibility into how an agent reasons and acts, teams can’t reliably debug failures, optimize performance, or build trust."

Builder note

When implementing observability, prioritize systems that allow detailed tracing of agent reasoning and tool calls. This will enable faster debugging and iterative improvements.

Source Card

State of AI AgentsLangChain's survey provides actionable insights into AI agent adoption, barriers, and engineering practices.

LangChain

| Signal | Why it matters |

|---|---|

| Large enterprises lead adoption | They have the resources to scale agents effectively. |

| Customer service is the top use case | Agents are increasingly customer-facing. |

| Quality is the biggest barrier | Accuracy and consistency are critical for trust. |

| Observability is widely adopted | Teams need visibility to debug and optimize agents. |

- Assess your organization's readiness for AI agent adoption.

- Prioritize observability systems for debugging and optimization.

- Focus on quality improvements to overcome production barriers.

- Consider latency tradeoffs in customer-facing applications.

- Address security concerns for large-scale deployments.

- Customer service agents enhance user interactions.

- Research agents accelerate knowledge synthesis.

- Internal workflow automation boosts team efficiency.

- Observability systems enable detailed tracing.

- Security safeguards are critical for enterprise-scale agents.

- LangChain's survey: https://www.langchain.com/state-of-agent-engineering

Practical Guidance for Builders

For engineers and operators, the survey underscores the importance of iterative development and robust infrastructure. Start with clear use cases that align with organizational goals, such as customer service or internal automation. Invest in observability systems early to ensure visibility into agent behavior.

When scaling, prioritize quality improvements to address accuracy and consistency. For latency-sensitive applications, explore tradeoffs between response time and output quality. Finally, implement security measures to safeguard sensitive data and workflows.

Conclusion

AI agents are transforming enterprise operations, but scaling them requires careful engineering. By focusing on observability, quality, and security, organizations can overcome barriers and unlock the full potential of AI agents. LangChain's survey provides a roadmap for builders navigating this dynamic landscape.

Builder implications

For teams evaluating State of AI Agent Engineering: Insights and Practical Guidance, the useful question is not whether the announcement sounds important. The useful question is whether it changes how an agent system is built, tested, operated, or bought. The source from langchain.com gives builders a concrete signal to inspect: State of AI Agents - langchain.com. That signal should be mapped against the parts of an agent stack that usually become fragile first, including tool contracts, long-running state, evaluation coverage, cost visibility, failure recovery, and the handoff between prototype code and production operations.

Production lens

Treat this as a systems decision, not a headline decision. A builder should ask how the change affects the agent loop, what needs to be measured, which failure modes become easier to catch, and whether the team can explain the behavior to a customer or operator when something goes wrong. If the answer is vague, the technology may still be useful, but it is not yet a production advantage.

Adoption checklist

- Identify the workflow where AI agents, observability, enterprise adoption, agent engineering already creates measurable pain, such as slow triage, brittle handoffs, unclear ownership, or poor observability.

- Write down the current baseline before changing the stack: latency, cost per run, recovery rate, review time, and the percentage of tasks that need human correction.

- Prototype against a real internal workflow instead of a demo task. The workflow should include imperfect inputs, missing context, tool failures, and at least one approval step.

- Add traces, event logs, and evaluation checkpoints before expanding usage. A new framework or model is hard to judge when the team cannot see where the agent made its decision.

- Keep rollback boring. The first version should let an operator pause automation, inspect the last decision, and return control to a human without losing state.

- Review the source again after testing. The source-backed claim should line up with observed behavior in your own environment, not just with launch copy or release notes.

| Area | Question | Practical test |

|---|---|---|

| Reliability | Does the agent fail in a way operators can understand? | Run the same task with missing data, stale data, and a tool timeout. |

| Observability | Can the team reconstruct why a decision happened? | Inspect traces for inputs, tool calls, model outputs, approvals, and final state. |

| Cost | Does value scale faster than usage cost? | Compare cost per successful task against the old human or scripted workflow. |

| Governance | Can sensitive actions be reviewed or blocked? | Require approval on high-impact actions and log who approved the step. |

What to watch next

The next signal to watch is whether builders start publishing implementation notes, migration stories, benchmarks, or reliability reports around this source. That secondary evidence matters because agent infrastructure often looks clean at release time and only shows its real shape once teams connect it to messy business workflows. Strong follow-on evidence would include reproducible examples, clear limits, documented failure recovery, and customer stories that describe what changed in the operating model.

Key Takeaways

- Do not treat a release as automatically production-ready because it comes from a strong source.

- Use the source as a reason to test a specific workflow, not as a reason to rewrite the entire stack.

- The best early signal is not novelty. It is whether the system becomes easier to observe, recover, and improve.