The landscape of open-source AI agent frameworks has evolved significantly by 2026, offering engineers, founders, and operators a diverse toolkit to automate workflows, build SaaS products, and streamline development pipelines. While the allure of 'earning while you sleep' persists in popular discourse, the reality is that these frameworks are best leveraged for building robust infrastructure rather than chasing speculative trading bots. This article explores the top frameworks, their engineering tradeoffs, and practical adoption strategies.

Top Frameworks of 2026: A Snapshot

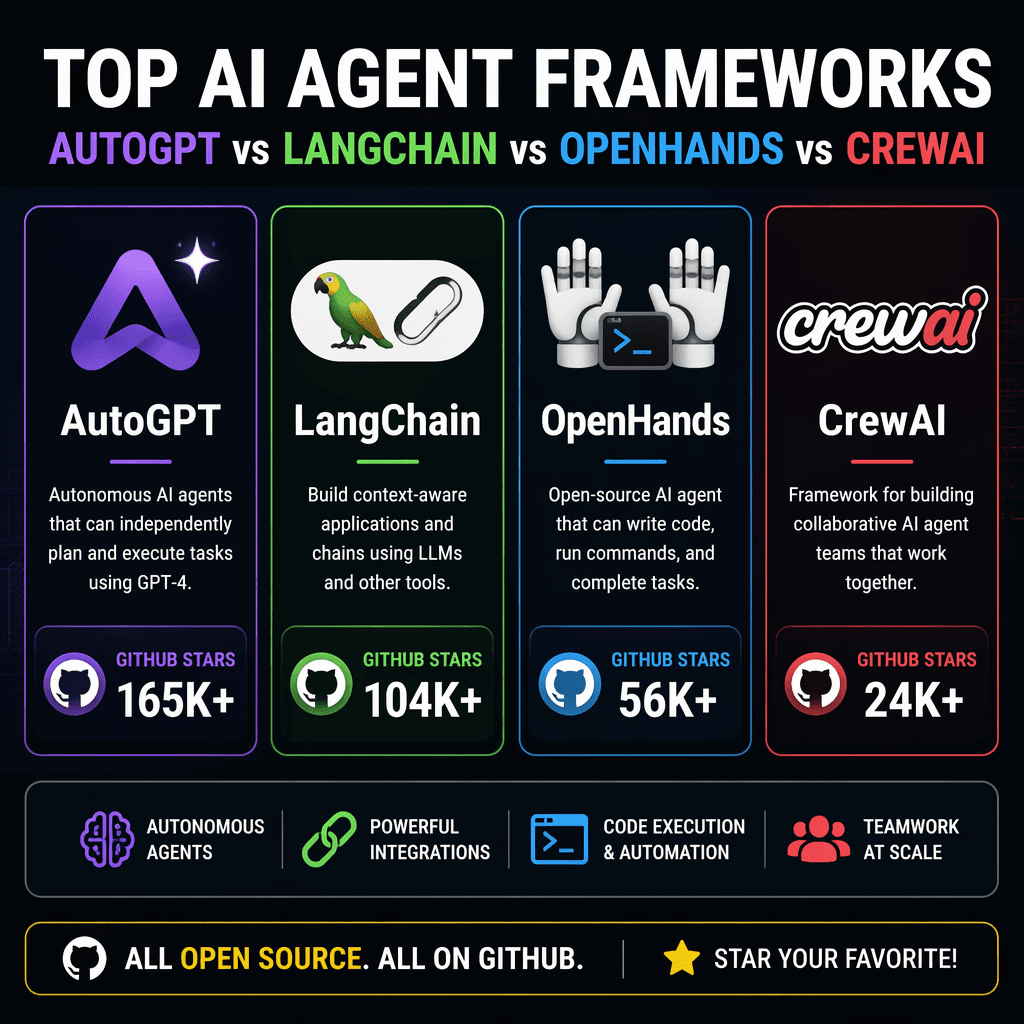

The ten most relevant open-source AI agent frameworks of 2026 are AutoGPT, LangChain, OpenHands, CrewAI, AutoGen, MetaGPT, ByteDance Deer-Flow, Cline, GPT-Engineer, and Aider. According to the source roundup, the list was verified via the GitHub API, and the star counts quoted below are a proxy for popularity and community engagement rather than a measure of production readiness. The frameworks differ in focus, from prompt chaining and composable workflows to modular, multi-agent design, so they suit different use cases.
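Star counts drift over time, so a reader may want to recheck them. The sketch below shows one way to do that with the public GitHub REST endpoint `GET /repos/{owner}/{repo}`, which returns a `stargazers_count` field. The function names and the `User-Agent` string are illustrative assumptions, and the owner and repository slugs are left for the reader to supply.

```python
import json
import urllib.request

# Public GitHub REST endpoint for repository metadata.
GITHUB_REPO_URL = "https://api.github.com/repos/{owner}/{repo}"


def parse_star_count(payload: str) -> int:
    """Extract the star count from a repository JSON payload."""
    data = json.loads(payload)
    return int(data["stargazers_count"])


def fetch_star_count(owner: str, repo: str, timeout: float = 10.0) -> int:
    """Fetch the current star count for owner/repo.

    Unauthenticated requests are rate-limited by GitHub, so this is
    suitable for spot checks rather than bulk polling.
    """
    request = urllib.request.Request(
        GITHUB_REPO_URL.format(owner=owner, repo=repo),
        headers={
            "Accept": "application/vnd.github+json",
            "User-Agent": "star-count-sketch",  # hypothetical client name
        },
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return parse_star_count(response.read().decode("utf-8"))
```

Calling `fetch_star_count("<owner>", "<repo>")` with a framework's actual repository slug returns the live count, which can then be compared with the figures quoted in this article.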

Key Takeaways

- AutoGPT leads with 184,000 GitHub stars, emphasizing autonomous task execution.

- LangChain (LangGraph) excels in composable workflows with 135,000 stars.

- OpenHands focuses on accessibility and modularity, boasting 72,100 stars.

- CrewAI and AutoGen are gaining traction for enterprise-grade automation.

None of these frameworks generate income automatically. They are tools to build products and automate workflows, not plug-and-play profit machines.

Builder note

When evaluating frameworks, prioritize alignment with your project’s technical requirements and scalability needs. Avoid overengineering by focusing on modularity and community support.

Source Card

10 Open-Source AI Agent Frameworks to Automate Your Work in 2026

This source provides a verified roundup of the most popular AI agent frameworks, emphasizing practical applications over speculative use cases.

Pasquale Pillitteri

| Framework | Why it matters |
|---|---|
| AutoGPT | Autonomous task execution with high configurability. |
| LangChain | Composable workflows for complex automation. |
| OpenHands | Accessibility and modular design for diverse use cases. |
| CrewAI | Enterprise-grade automation with scalability. |
| AutoGen | Microsoft-backed framework for robust agent orchestration. |

Tradeoffs and Engineering Considerations

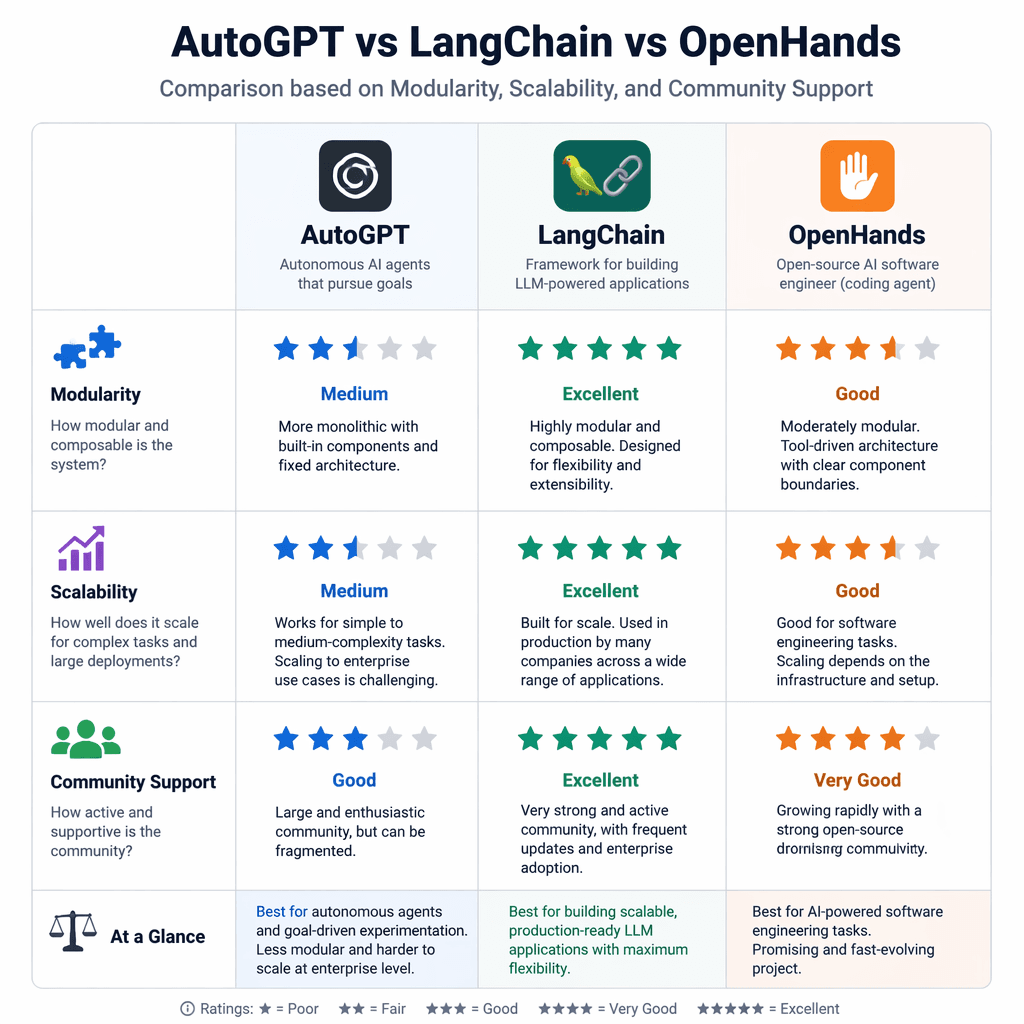

Each framework comes with its own set of tradeoffs. AutoGPT, for instance, excels in autonomous task execution but requires significant computational resources, making it less suitable for lightweight applications. LangChain offers composability but demands careful orchestration to avoid complexity creep. OpenHands prioritizes modularity, which can simplify development but may limit advanced integrations. Engineers should weigh these factors against project goals and infrastructure constraints.

- Define your automation goals clearly before selecting a framework.

- Evaluate community support and documentation quality for long-term viability.

- Test frameworks in isolated environments to assess performance under load.

- Prioritize modularity to enable future scalability and integration.
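The advice to test frameworks in isolation under load can be sketched as a small harness. This is a minimal example, not a benchmark suite: `stub_agent` is a hypothetical stand-in for whatever call invokes the framework under test, and the concurrency level and percentile choice are assumptions to adjust.

```python
import math
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, NamedTuple


class LoadReport(NamedTuple):
    runs: int
    p50_s: float
    p95_s: float
    max_s: float


def stub_agent(task: str) -> str:
    """Hypothetical stand-in for a framework call; swap in a real agent invocation."""
    time.sleep(0.01)  # simulate model and tool latency
    return f"done: {task}"


def timed_call(agent: Callable[[str], str], task: str) -> float:
    """Return wall-clock seconds for one agent call (queue wait excluded)."""
    start = time.perf_counter()
    agent(task)
    return time.perf_counter() - start


def load_test(agent: Callable[[str], str], tasks: list[str], concurrency: int = 4) -> LoadReport:
    """Run tasks concurrently and summarize latency with nearest-rank percentiles."""
    if not tasks:
        raise ValueError("tasks must not be empty")
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = sorted(pool.map(lambda task: timed_call(agent, task), tasks))
    p95_index = math.ceil(0.95 * len(latencies)) - 1
    return LoadReport(
        runs=len(latencies),
        p50_s=statistics.median(latencies),
        p95_s=latencies[p95_index],
        max_s=latencies[-1],
    )
```

Running `load_test(stub_agent, tasks, concurrency=8)` against the same task list for each candidate gives comparable latency numbers, provided the tasks resemble real workload rather than demo prompts.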

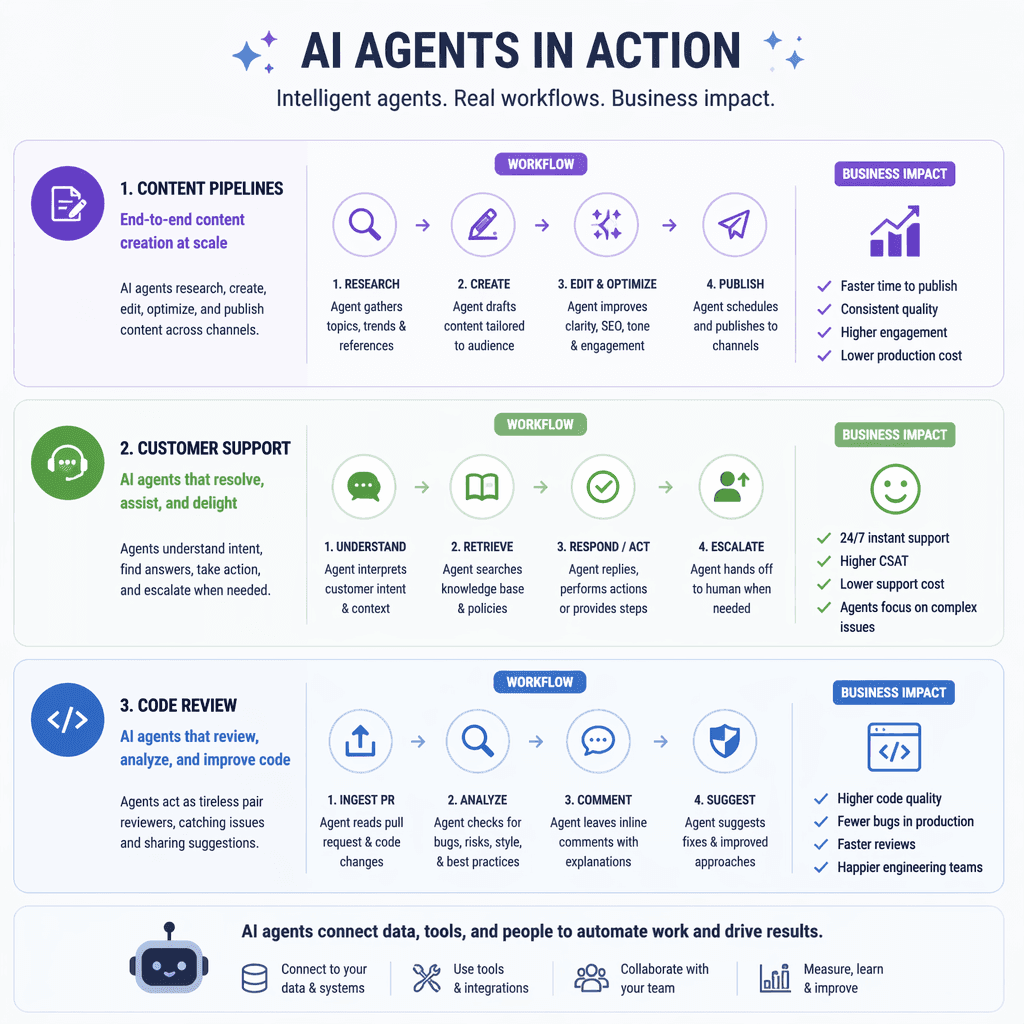

Real-World Use Cases

Open-source AI agent frameworks are being deployed across industries to solve practical problems. Common use cases include industrial-scale content generation, automated customer support, code review for private repositories, and assisted document creation. For example, LangChain is often used to build content pipelines, while AutoGen excels in automating repetitive enterprise workflows.

- Industrial content pipelines: Automate blog generation and SEO optimization.

- Customer support: Deploy agents for 24/7 query resolution.

- Code review: Streamline pull request analysis for private repositories.

- Document generation: Create contracts and reports with minimal manual input.

How to Choose the Right Framework

Selecting the right framework depends on your project’s scope, technical requirements, and resource availability. AutoGPT is ideal for autonomous workflows, while LangChain suits projects requiring composable logic. OpenHands is a strong choice for modular, accessible solutions. Engineers should also consider factors like community activity, extensibility, and compatibility with existing infrastructure.
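One way to make this comparison explicit is a weighted decision matrix. The sketch below is illustrative only: the criteria names, the weights, and the 1 to 5 scores for `candidate_a` and `candidate_b` are hypothetical placeholders, not measurements of any real framework.

```python
# Hypothetical criteria weights; adjust to your project's priorities.
WEIGHTS = {
    "autonomy": 0.20,
    "composability": 0.30,
    "modularity": 0.20,
    "community": 0.15,
    "infra_fit": 0.15,
}


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Return the weight-normalized average of the per-criterion scores."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(scores[name] * weight for name, weight in weights.items()) / total_weight


def rank(candidates: dict[str, dict[str, float]], weights: dict[str, float]) -> list[tuple[str, float]]:
    """Sort candidates from highest to lowest weighted score."""
    scored = [(name, round(weighted_score(scores, weights), 2)) for name, scores in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Placeholder scores on a 1-5 scale, filled in by the evaluating team.
CANDIDATES = {
    "candidate_a": {"autonomy": 5, "composability": 2, "modularity": 3, "community": 4, "infra_fit": 3},
    "candidate_b": {"autonomy": 2, "composability": 5, "modularity": 4, "community": 4, "infra_fit": 4},
}
```

The value of the exercise is less the final number than the discussion it forces about which criteria actually matter for the project.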

- Pasquale Pillitteri, '10 Open-Source AI Agent Frameworks to Automate Your Work in 2026,' https://pasqualepillitteri.it/en/news/1476/10-open-source-ai-agent-frameworks-2026

Builder implications

For teams evaluating these frameworks, the useful question is not whether a roundup or release sounds important, but whether it changes how an agent system is built, tested, operated, or bought. The source from pasqualepillitteri.it gives builders a concrete list to inspect: 10 Open-Source AI Agent Frameworks to Automate Your Work in 2026. That list should be mapped against the parts of an agent stack that usually become fragile first, including tool contracts, long-running state, evaluation coverage, cost visibility, failure recovery, and the handoff between prototype code and production operations.

Production lens

Treat this as a systems decision, not a headline decision. A builder should ask how the change affects the agent loop, what needs to be measured, which failure modes become easier to catch, and whether the team can explain the behavior to a customer or operator when something goes wrong. If the answer is vague, the technology may still be useful, but it is not yet a production advantage.

Adoption checklist

- Identify a workflow that already causes measurable pain and that an agent framework such as AutoGPT or LangChain could plausibly address, such as slow triage, brittle handoffs, unclear ownership, or poor observability.

- Write down the current baseline before changing the stack: latency, cost per run, recovery rate, review time, and the percentage of tasks that need human correction.

- Prototype against a real internal workflow instead of a demo task. The workflow should include imperfect inputs, missing context, tool failures, and at least one approval step.

- Add traces, event logs, and evaluation checkpoints before expanding usage. A new framework or model is hard to judge when the team cannot see where the agent made its decision.

- Keep rollback boring. The first version should let an operator pause automation, inspect the last decision, and return control to a human without losing state.

- Review the source again after testing. The source-backed claim should line up with observed behavior in your own environment, not just with launch copy or release notes.
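The baseline step in the checklist above can be made concrete with a small summary function. This is a sketch under assumptions: the `RunRecord` fields are a hypothetical schema, and recovery rate is defined here as the share of runs that hit a failure and then recovered without human takeover.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass(frozen=True)
class RunRecord:
    """One workflow run; field names are a hypothetical schema."""
    latency_s: float
    cost_usd: float
    succeeded: bool
    hit_failure: bool       # a tool error, timeout, or bad output occurred
    recovered: bool         # the run recovered without human takeover
    human_corrected: bool   # a person had to fix the result


def ratio(numerator: float, denominator: float) -> Optional[float]:
    """Divide safely, returning None when the denominator is zero."""
    return numerator / denominator if denominator else None


def baseline(runs: list[RunRecord]) -> dict[str, Optional[float]]:
    """Summarize the metrics to record before changing the stack."""
    if not runs:
        raise ValueError("need at least one run to compute a baseline")
    total_cost = sum(run.cost_usd for run in runs)
    failures = [run for run in runs if run.hit_failure]
    return {
        "mean_latency_s": mean(run.latency_s for run in runs),
        "cost_per_run_usd": total_cost / len(runs),
        "cost_per_success_usd": ratio(total_cost, sum(run.succeeded for run in runs)),
        "recovery_rate": ratio(sum(run.recovered for run in failures), len(failures)),
        "human_correction_pct": 100 * sum(run.human_corrected for run in runs) / len(runs),
    }
```

Computing the same summary before and after a framework change gives a like-for-like comparison, including cost per successful task rather than cost per attempt.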

| Area | Question | Practical test |
|---|---|---|
| Reliability | Does the agent fail in a way operators can understand? | Run the same task with missing data, stale data, and a tool timeout. |
| Observability | Can the team reconstruct why a decision happened? | Inspect traces for inputs, tool calls, model outputs, approvals, and final state. |
| Cost | Does value scale faster than usage cost? | Compare cost per successful task against the old human or scripted workflow. |
| Governance | Can sensitive actions be reviewed or blocked? | Require approval on high-impact actions and log who approved the step. |
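The governance row can be prototyped with a thin approval gate around tool execution. The sketch below is framework-agnostic and hypothetical throughout: the action names in `HIGH_IMPACT_ACTIONS`, the `ApprovalGate` class, and the approver callback are assumptions, not part of any framework's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable, Optional

# Hypothetical set of actions that must not run without sign-off.
HIGH_IMPACT_ACTIONS = {"send_payment", "delete_records"}

# An approver returns the approver's identifier, or None to block the action.
Approver = Callable[[str, dict], Optional[str]]


@dataclass
class ApprovalGate:
    approver: Approver
    audit_log: list[dict] = field(default_factory=list)

    def _log(self, action: str, status: str, approved_by: Optional[str]) -> None:
        """Record what happened, who approved it, and when."""
        self.audit_log.append({
            "action": action,
            "status": status,
            "approved_by": approved_by,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def execute(self, action: str, params: dict, run: Callable[[dict], Any]) -> Any:
        """Run the action, requiring approval first when it is high impact."""
        approved_by = None
        if action in HIGH_IMPACT_ACTIONS:
            approved_by = self.approver(action, params)
            if approved_by is None:
                self._log(action, "blocked", None)
                raise PermissionError(f"{action} was not approved")
        result = run(params)
        self._log(action, "executed", approved_by)
        return result
```

In practice the approver callback would route to a human review queue; keeping the audit log separate from the agent's own trace makes it easier to answer who approved a step after the fact.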

What to watch next

The next signal to watch is whether builders start publishing implementation notes, migration stories, benchmarks, or reliability reports about the frameworks in this roundup. That secondary evidence matters because agent infrastructure often looks clean at release time and only shows its real shape once teams connect it to messy business workflows. Strong follow-on evidence would include reproducible examples, clear limits, documented failure recovery, and customer stories that describe what changed in the operating model.

Key Takeaways

- Do not treat a release as automatically production-ready because it comes from a strong source.

- Use the source as a reason to test a specific workflow, not as a reason to rewrite the entire stack.

- The best early signal is not novelty. It is whether the system becomes easier to observe, recover, and improve.