As AI agents become more complex and more widely deployed, robust observability frameworks matter more. Observability goes beyond traditional logging by providing structured traces that capture an agent's decision-making process, so engineers can pinpoint failures, optimize workflows, and systematically improve performance. This article covers current practice in agent observability, focusing on tracing, testing, and iterative improvement.

Why Observability Matters for AI Agents

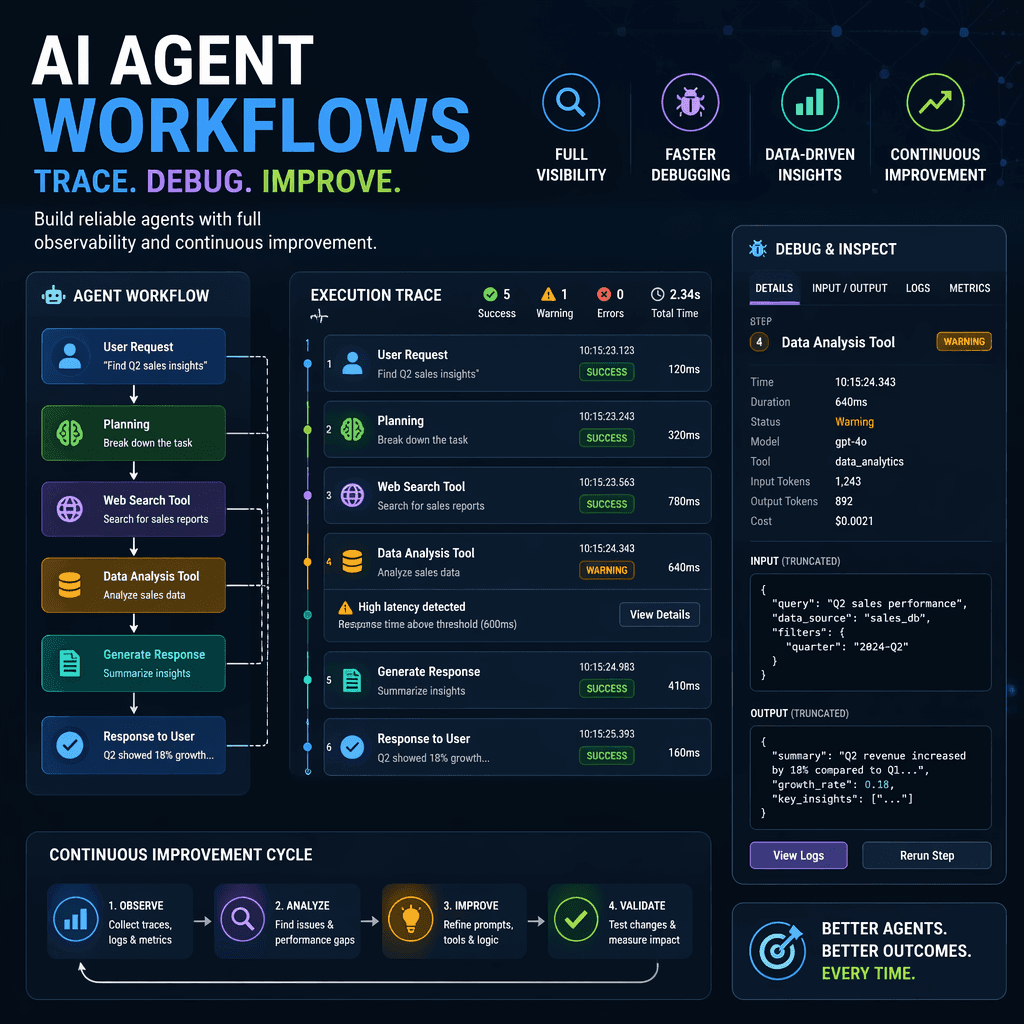

AI agents are inherently non-deterministic, meaning the same input can lead to different outputs depending on the context, tool calls, and reasoning paths. Traditional debugging methods, such as print statements and basic logs, fall short in capturing this complexity. Observability frameworks address this gap by providing step-by-step visibility into agent execution, allowing engineers to trace failures to their root causes and understand why an agent behaved a certain way.

Key Takeaways

- Observability enables systematic debugging by capturing structured traces of agent workflows.

- Tracing helps localize failures in multi-step processes, such as tool calls and reasoning loops.

- AI-assisted debugging accelerates root cause analysis by allowing engineers to query traces in natural language.

Observability transforms debugging from reactive guesswork into proactive improvement cycles.

Builder note

When scaling AI agents to production, observability becomes a critical feature for maintaining reliability, optimizing costs, and meeting SLAs.

Source Card

AI Agent Observability: Tracing, Testing, and Improving Agents

This source provides a detailed overview of observability practices for AI agents, including tracing, testing, and debugging workflows.

LangChain

| Signal | Why it matters |
|---|---|
| Tool call latency | Identifies bottlenecks in execution workflows. |
| Token usage per step | Helps optimize costs and detect inefficiencies. |
| Reasoning divergence | Pinpoints where the agent deviates from expected behavior. |
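
As a rough illustration of how these three signals could be derived from recorded steps, here is a minimal Python sketch. The `Step` record, the step names, and the `summarize` and `first_divergence` helpers are all hypothetical and not part of any tracing library. Divergence is approximated here as the first position where the actual step sequence differs from an expected one, which is only one of several ways to define it.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Step:
    """One recorded agent step (hypothetical schema for illustration)."""
    name: str          # e.g. "tool:search" or "llm"
    latency_ms: float
    tokens: int


def summarize(steps):
    """Group steps by name; report call count, mean latency, and total tokens."""
    by_name = defaultdict(list)
    for step in steps:
        by_name[step.name].append(step)
    return {
        name: {
            "calls": len(group),
            "mean_latency_ms": mean(s.latency_ms for s in group),
            "total_tokens": sum(s.tokens for s in group),
        }
        for name, group in by_name.items()
    }


def first_divergence(expected, actual):
    """Return the index of the first step that differs, or None if identical."""
    for i, (e, a) in enumerate(zip(expected, actual)):
        if e != a:
            return i
    if len(expected) != len(actual):
        # One sequence is a strict prefix of the other.
        return min(len(expected), len(actual))
    return None


steps = [
    Step("tool:search", 120.0, 0),
    Step("llm", 800.0, 450),
    Step("tool:search", 180.0, 0),
    Step("llm", 600.0, 350),
]
summary = summarize(steps)
divergence = first_divergence(
    ["retrieve", "llm", "answer"],
    ["retrieve", "tool:search", "answer"],
)
```

In this sketch, `summary` surfaces latency and token totals per step type, and `divergence` points at the step where the run left the expected path.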

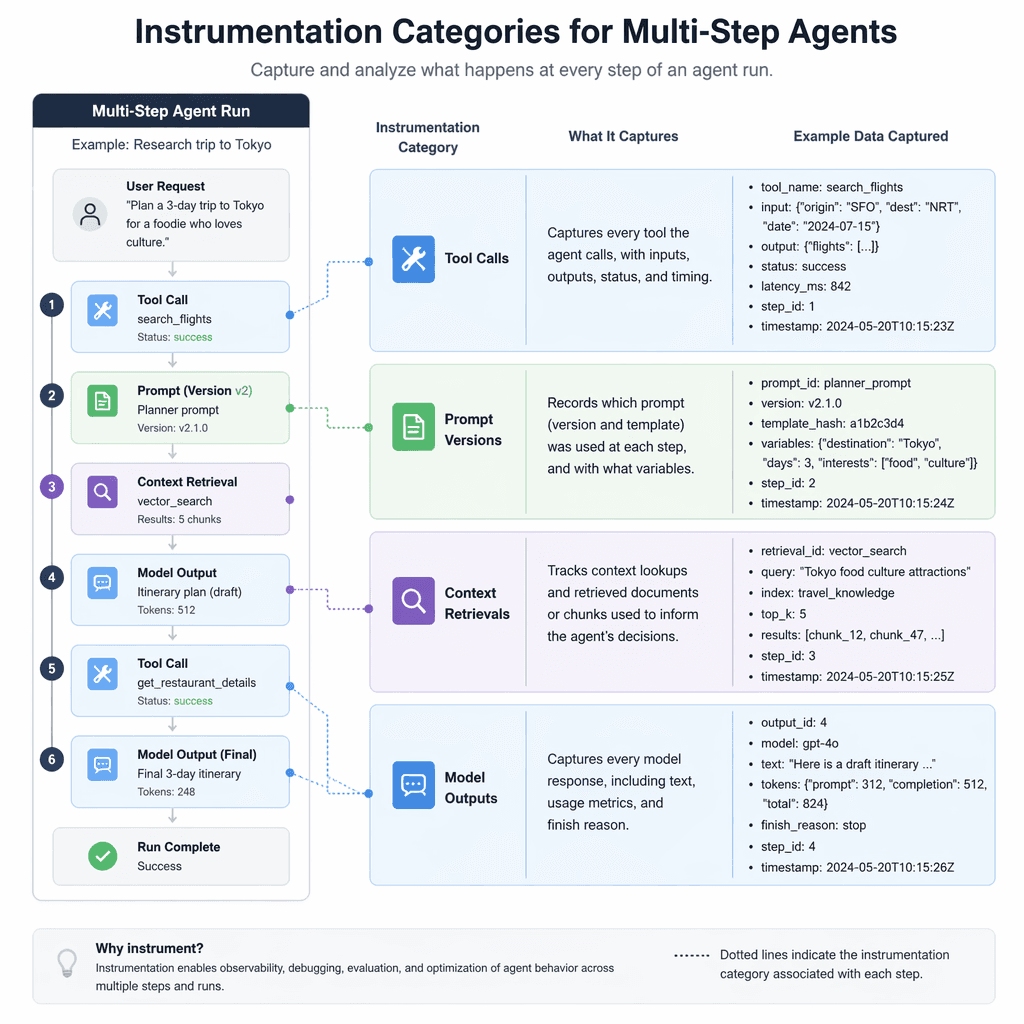

Instrumentation for Multi-Step Agents

Effective instrumentation is crucial for debugging multi-step agents. Engineers should focus on capturing tool calls, prompt versions, context retrievals, and model outputs as structured traces. These traces provide granular visibility into each step of the agent's workflow, enabling systematic analysis and improvement. For example, if a retrieval step consistently returns irrelevant documents, traces can help identify whether the issue lies in the query formulation or the retrieval system itself.

- Instrument tool calls to monitor latency and success rates.

- Track prompt versions to evaluate the impact of changes on agent behavior.

- Capture context retrievals to ensure relevant data is being fetched.

- Log model outputs to analyze reasoning paths and detect hallucinations.
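
The four instrumentation points above can be sketched with a small in-memory tracer. This is a minimal illustration under stated assumptions, not the API of LangChain or any other tracing product: the `Trace` class, the span fields, and the step names are hypothetical, and the outputs are stand-ins for real retrieval and model results.

```python
import time
import uuid
from contextlib import contextmanager


class Trace:
    """Collects structured spans for one agent run (hypothetical helper)."""

    def __init__(self, prompt_version):
        self.trace_id = uuid.uuid4().hex
        self.prompt_version = prompt_version  # lets runs be compared across prompt changes
        self.spans = []

    @contextmanager
    def span(self, kind, name, **attrs):
        """Record latency, status, and output for one step."""
        record = {"kind": kind, "name": name, "attrs": attrs,
                  "status": "ok", "output": None}
        start = time.perf_counter()
        try:
            yield record
        except Exception as exc:
            record["status"] = "error"
            record["error"] = repr(exc)
            raise
        finally:
            record["latency_ms"] = (time.perf_counter() - start) * 1000
            self.spans.append(record)


trace = Trace(prompt_version="v3")

# Context retrieval: keep the query and the returned document IDs.
with trace.span("retrieval", "search_docs", query="refund policy") as s:
    s["output"] = ["doc-12", "doc-40"]  # stand-in for real results

# Model output: keep the text so reasoning can be reviewed later.
with trace.span("model", "draft_answer") as s:
    s["output"] = "placeholder model answer"

# Tool call: a failure is recorded on the span before it propagates.
try:
    with trace.span("tool", "send_email") as s:
        raise TimeoutError("mail service timed out")
except TimeoutError:
    pass
```

Because each span stores its inputs alongside its output, a retrieval step that returns irrelevant documents can be inspected together with the query that produced them.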

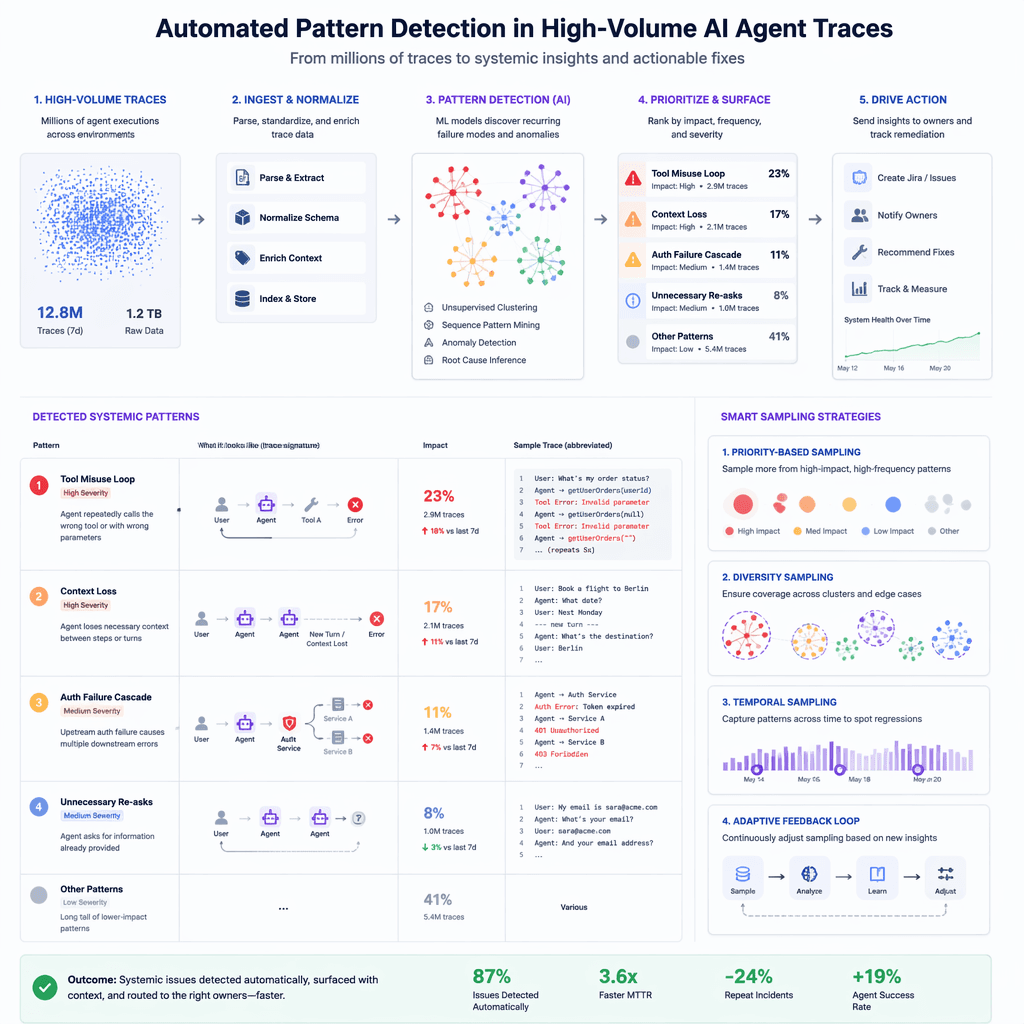

Scaling Observability for Production

As agents scale to production environments, observability infrastructure must evolve to handle increased trace volume. Automated pattern detection becomes essential for identifying systemic issues, such as recurring retrieval failures or tool call sequences that correlate with errors. Sampling strategies and retention policies help manage storage costs while preserving critical debugging data. At scale, observability shifts from an operational nice-to-have to a foundational capability for maintaining reliability and performance.

- Automated pattern detection identifies systemic issues in high-volume traces.

- Sampling strategies prioritize traces for deep analysis.

- Retention policies balance storage costs with debugging needs.
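
One possible sampling strategy, sketched below, keeps every failed or slow trace and a fixed fraction of the rest. The `keep_trace` function, the 10% default rate, and the 5000 ms threshold are assumptions chosen for illustration, not recommendations from the source. Hashing the trace ID makes the decision deterministic, so every service that sees the same trace makes the same choice.

```python
import hashlib


def keep_trace(trace_id, has_error, latency_ms, sample_rate=0.1, slow_ms=5000):
    """Decide whether to retain a trace for deep analysis (hypothetical policy)."""
    # Always keep the traces most likely to matter for debugging.
    if has_error or latency_ms >= slow_ms:
        return True
    # Map the trace ID to a stable 64-bit bucket and keep the lowest fraction.
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")
    return bucket < sample_rate * 2**64


decisions = {
    "failed": keep_trace("trace-a", has_error=True, latency_ms=300.0),
    "slow": keep_trace("trace-b", has_error=False, latency_ms=9000.0),
    "routine": keep_trace("trace-c", has_error=False, latency_ms=300.0),
}
```

A retention policy could build on the same decision, for example by storing kept error traces longer than routine samples.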

AI-Assisted Debugging with Natural Language Queries

AI-assisted debugging tools allow engineers to query traces in natural language, streamlining root cause analysis. For example, an engineer can ask, 'Why did the agent fail to retrieve relevant documents?' and receive a detailed explanation based on trace data. This capability reduces the time spent manually reviewing logs and accelerates troubleshooting workflows, making it easier to turn observations into actionable improvements.
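
How such tools work internally is not described in the source. One plausible approach is to serialize the relevant spans and hand them to a language model along with the engineer's question. The sketch below covers only the prompt-building half. The `build_debug_prompt` function, the span fields, and the character budget are hypothetical, and the model call itself is deliberately left out.

```python
import json


def build_debug_prompt(question, spans, max_chars=4000):
    """Serialize spans, failed ones first, and pair them with the question."""
    # Stable sort: error spans move to the front, original order otherwise kept.
    ordered = sorted(spans, key=lambda s: s.get("status") != "error")
    lines = []
    used = 0
    for span in ordered:
        line = json.dumps(span, sort_keys=True, default=str)
        if used + len(line) > max_chars:
            break  # stay within the context budget
        lines.append(line)
        used += len(line)
    return (
        "You are helping debug an AI agent. Answer using only the trace below.\n"
        f"Question: {question}\n"
        "Trace spans (one JSON object per line):\n" + "\n".join(lines)
    )


spans = [
    {"name": "search_docs", "status": "ok", "output": []},
    {"name": "rerank", "status": "error", "error": "timeout"},
]
prompt = build_debug_prompt(
    "Why did the agent fail to retrieve relevant documents?", spans
)
```

Putting failed spans first means the most relevant evidence survives when a long trace has to be truncated.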

Adoption Guidance and Tradeoffs

While observability offers significant benefits, it also introduces tradeoffs in terms of complexity and cost. Engineers should evaluate whether their application warrants full tracing based on factors such as workflow complexity, request volume, and debugging requirements. For single-turn chains with direct input-output relationships, basic logging may suffice. However, for multi-step agents or high-volume applications, the investment in observability infrastructure pays off by enabling systematic improvement and cost optimization.

- LangChain: AI Agent Observability - https://www.langchain.com/articles/agent-observability

- State of Agent Engineering Report - https://www.langchain.com/state-of-agent-engineering

Builder implications

For teams evaluating agent observability, the useful question is not whether the topic sounds important but whether it changes how an agent system is built, tested, operated, or bought. The LangChain article, AI Agent Observability: Tracing, Testing, and Improving Agents, gives builders a concrete signal to inspect. Map that signal against the parts of an agent stack that usually become fragile first: tool contracts, long-running state, evaluation coverage, cost visibility, failure recovery, and the handoff between prototype code and production operations.

Production lens

Treat this as a systems decision, not a headline decision. A builder should ask how the change affects the agent loop, what needs to be measured, which failure modes become easier to catch, and whether the team can explain the behavior to a customer or operator when something goes wrong. If the answer is vague, the technology may still be useful, but it is not yet a production advantage.

Adoption checklist

- Identify the workflow where limited visibility into agent behavior already creates measurable pain, such as slow triage, brittle handoffs, unclear ownership, or decisions nobody can explain.

- Write down the current baseline before changing the stack: latency, cost per run, recovery rate, review time, and the percentage of tasks that need human correction.

- Prototype against a real internal workflow instead of a demo task. The workflow should include imperfect inputs, missing context, tool failures, and at least one approval step.

- Add traces, event logs, and evaluation checkpoints before expanding usage. A new framework or model is hard to judge when the team cannot see where the agent made its decision.

- Keep rollback boring. The first version should let an operator pause automation, inspect the last decision, and return control to a human without losing state.

- Review the source again after testing. The source-backed claim should line up with observed behavior in your own environment, not just with launch copy or release notes.

| Area | Question | Practical test |
|---|---|---|
| Reliability | Does the agent fail in a way operators can understand? | Run the same task with missing data, stale data, and a tool timeout. |
| Observability | Can the team reconstruct why a decision happened? | Inspect traces for inputs, tool calls, model outputs, approvals, and final state. |
| Cost | Does value scale faster than usage cost? | Compare cost per successful task against the old human or scripted workflow. |
| Governance | Can sensitive actions be reviewed or blocked? | Require approval on high-impact actions and log who approved the step. |
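
The cost test and the baseline from the checklist can be made concrete with a few lines of arithmetic. The following is a minimal sketch. The `Run` record and its fields are hypothetical, and the figures are made-up sample values rather than measurements.

```python
from dataclasses import dataclass


@dataclass
class Run:
    """Outcome of one agent run (hypothetical schema for illustration)."""
    cost_usd: float
    succeeded: bool
    needed_human_fix: bool


def baseline(runs):
    """Compute cost per run, cost per successful task, and human correction rate."""
    if not runs:
        raise ValueError("at least one run is required")
    total_cost = sum(r.cost_usd for r in runs)
    successes = sum(r.succeeded for r in runs)
    return {
        "cost_per_run": total_cost / len(runs),
        # Failed runs still cost money, so divide total cost by successes only.
        "cost_per_successful_task": total_cost / successes if successes else float("inf"),
        "human_correction_rate": sum(r.needed_human_fix for r in runs) / len(runs),
    }


runs = [
    Run(0.25, True, False),
    Run(0.50, False, True),
    Run(0.25, True, True),
    Run(0.50, True, False),
]
metrics = baseline(runs)
```

Dividing total cost by successful runs, rather than by all runs, keeps the cost of failed attempts visible in the comparison with the old workflow.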

What to watch next

The next signal to watch is whether builders start publishing implementation notes, migration stories, benchmarks, or reliability reports around this source. That secondary evidence matters because agent infrastructure often looks clean at release time and only shows its real shape once teams connect it to messy business workflows. Strong follow-on evidence would include reproducible examples, clear limits, documented failure recovery, and customer stories that describe what changed in the operating model.

Key Takeaways

- Do not treat a release as automatically production-ready because it comes from a strong source.

- Use the source as a reason to test a specific workflow, not as a reason to rewrite the entire stack.

- The best early signal is not novelty. It is whether the system becomes easier to observe, recover, and improve.