When building AI agents for enterprise use, the transition from prototype to production often reveals critical gaps in reliability, governance, and scalability. While many frameworks excel at creating impressive demos, few are equipped to handle the complexities of real-world traffic, multi-step workflows, and regulatory scrutiny. This article evaluates eight leading AI agent frameworks based on their production readiness, orchestration capabilities, and suitability for regulated industries.

Why Production Readiness Matters

Engineering teams frequently encounter challenges when deploying AI agents in production. Issues such as tool call failures, lack of observability, and unpredictable agent behavior can derail projects. For regulated industries like finance, healthcare, and telecommunications, these challenges are compounded by the need for stringent governance and auditability. Selecting the right framework is critical to ensuring agents remain reliable under real-world conditions.

Evaluation Criteria for AI Agent Frameworks

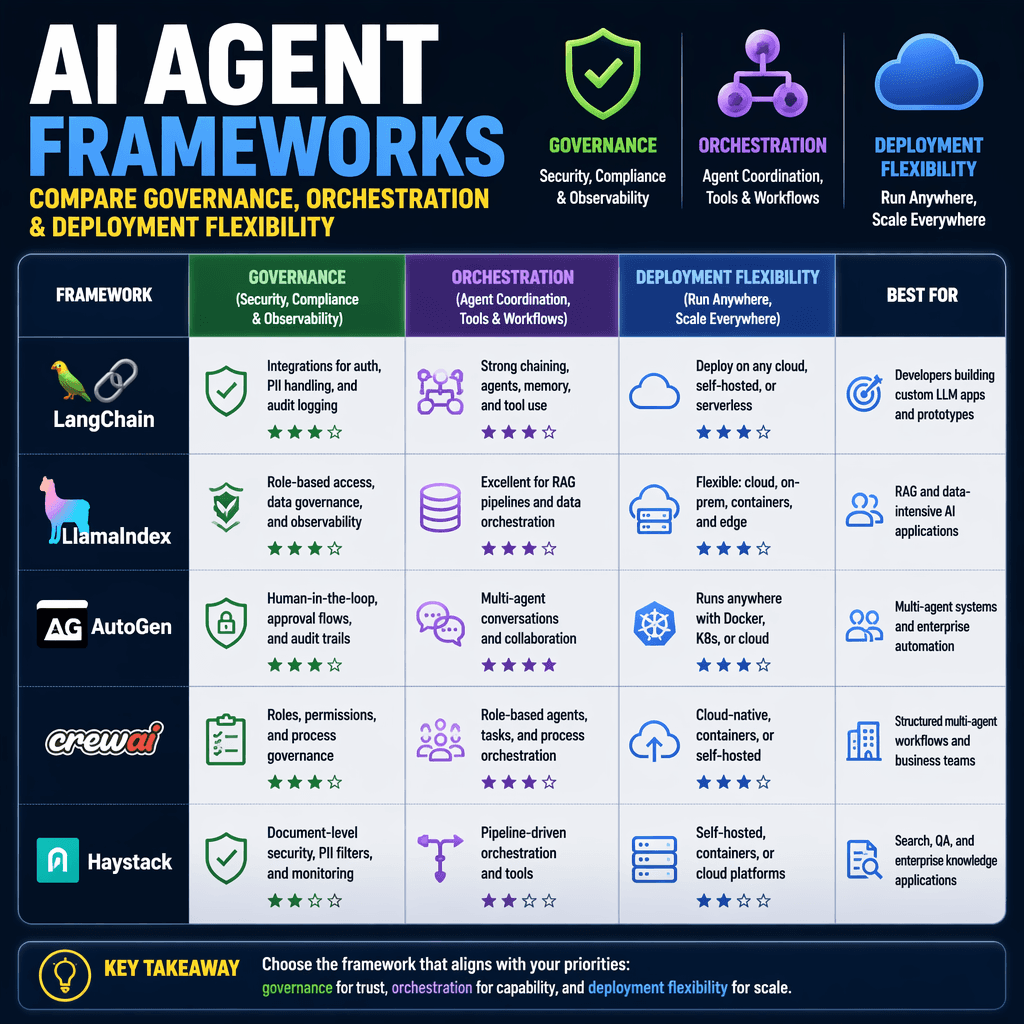

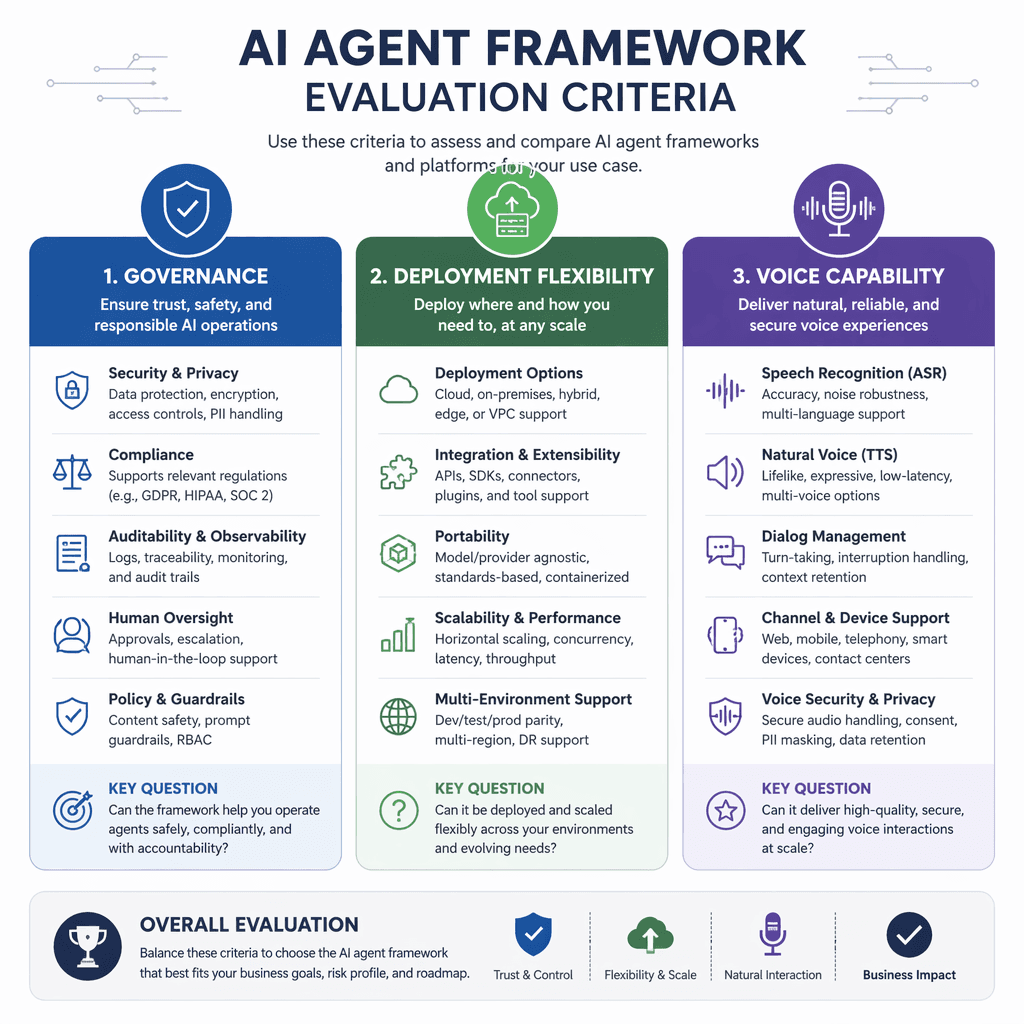

Our evaluation focused on seven key dimensions: orchestration architecture, governance controls, deployment flexibility, extensibility, voice capability, pricing, and community support. These criteria were weighted to prioritize frameworks that meet the needs of enterprise teams operating in regulated environments.

| Criterion | Weight | What We Measured |

|---|---|---|

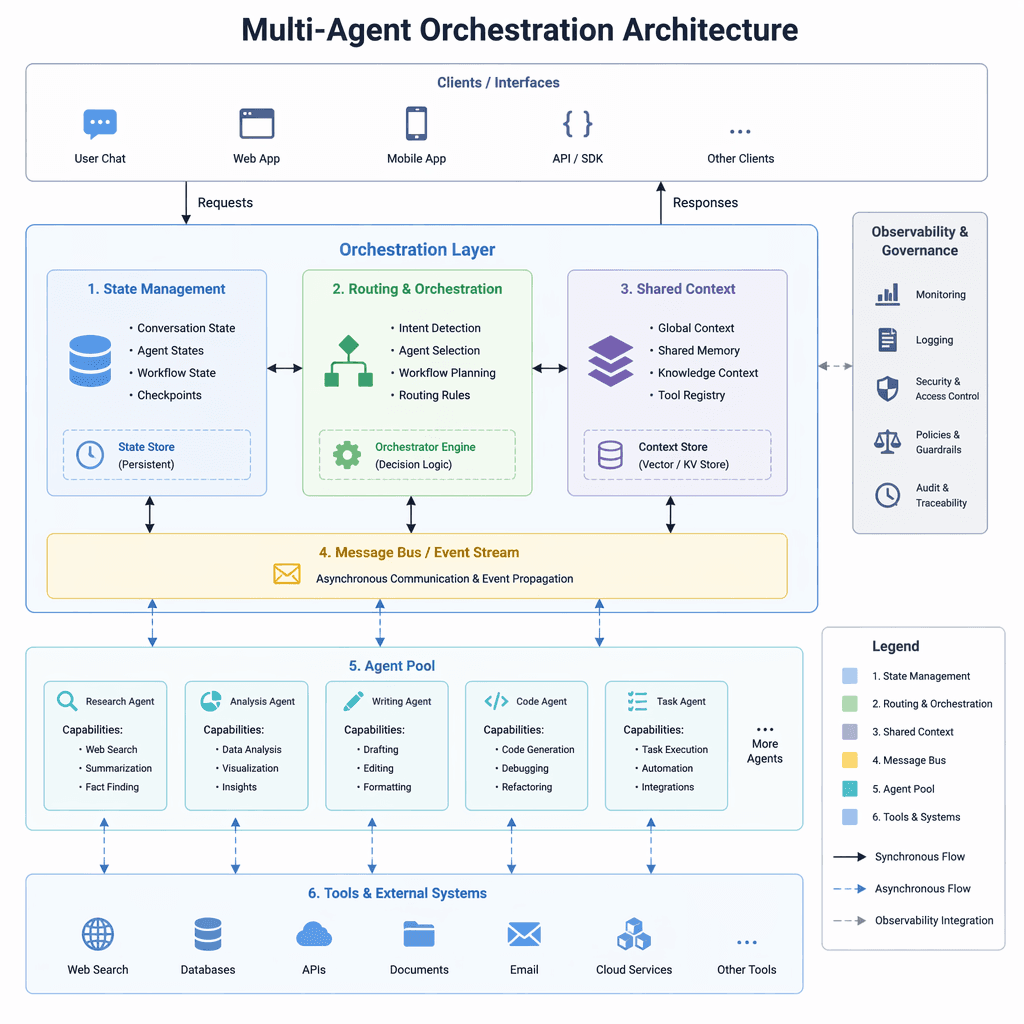

| Orchestration & Multi-Agent Architecture | 20% | Cross-skill coordination, state management, multi-agent routing, shared context |

| LLM Governance & Constraint Controls | 20% | Deterministic business logic, policy enforcement, hallucination prevention, audit trails |

| Deployment Flexibility & Data Sovereignty | 15% | Self-hosted, private cloud, on-premise options; data residency controls |

| Extensibility & Code-Level Control | 15% | Custom modules, MCP/A2A support, Action Server, replaceable engine components |

| Voice & Multi-Channel Capability | 10% | Production voice quality, cross-channel continuity, latency, concurrent call handling |

| Pricing & Total Cost of Ownership | 10% | Transparent pricing, billing model predictability, hidden costs, scaling economics |

| Community, Support & Documentation | 10% | Capterra/G2 ratings, support responsiveness, documentation quality, community activity |
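
One way to apply these weights to your own shortlist is a plain weighted sum. The Python sketch below assumes each criterion is scored on a 0-10 scale; the dictionary keys, the scale, and the sample scores are illustrative assumptions, since the source's raw per-criterion scores are not reproduced here. Only the weights come from the table.

```python
# Criterion weights taken from the evaluation table (20/20/15/15/10/10/10 percent).
WEIGHTS = {
    "orchestration": 0.20,
    "governance": 0.20,
    "deployment": 0.15,
    "extensibility": 0.15,
    "voice": 0.10,
    "pricing": 0.10,
    "community": 0.10,
}


def weighted_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Combine per-criterion scores (assumed 0-10 scale) into one weighted total."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = weights.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


# Hypothetical scores for one candidate framework (not taken from the source).
candidate = {
    "orchestration": 8,
    "governance": 9,
    "deployment": 9,
    "extensibility": 7,
    "voice": 8,
    "pricing": 6,
    "community": 7,
}
print(round(weighted_score(candidate), 2))  # 7.9
```

A weighted sum keeps the arithmetic easy to audit. Teams in regulated industries may prefer to add hard floors, such as rejecting any framework below a minimum governance score, so that strong scores elsewhere cannot compensate for a compliance gap.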

Top Frameworks for Enterprise AI Agents

In the source evaluation, published on the Rasa Blog, Rasa CALM ranks as the best overall choice among the eight frameworks for enterprise teams. It excels in governance, deployment flexibility, and voice capability, which makes it particularly suited to regulated industries. Other notable frameworks include LangChain for prototyping and integrations, CrewAI for multi-agent collaboration, and Vellum for low-code/no-code solutions.

Key Takeaways

- Rasa CALM is the top choice for enterprise teams needing production-grade orchestration and governance.

- LangChain offers flexibility for prototyping and integrations but lacks enterprise-grade governance.

- CrewAI specializes in multi-agent collaboration, making it ideal for complex workflows.

- Vellum provides low-code/no-code options, simplifying deployment for non-technical teams.

The best AI agent framework is not the one that builds the most impressive demo. It’s the one that stays reliable when complexity, traffic, and regulatory scrutiny increase.

Builder note

When evaluating frameworks, prioritize production readiness over demo-day performance. Look for features like deterministic business logic, audit trails, and deployment flexibility.
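
A minimal sketch of what deterministic business logic with an audit trail can look like, independent of any particular framework: the model only proposes an action, and plain Python code decides whether it may run, recording every decision. `ProposedAction`, `ALLOWED_ACTIONS`, `REFUND_LIMIT`, and the JSON record format are hypothetical names chosen for illustration, not the API of Rasa CALM or any other framework discussed here.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProposedAction:
    """An action the LLM has proposed; nothing runs until policy approves it."""
    name: str
    amount: float
    customer_id: str


# Hypothetical business rules: the set of permitted actions and a hard limit.
ALLOWED_ACTIONS = {"issue_refund", "send_receipt"}
REFUND_LIMIT = 500.0


def check_policy(action: ProposedAction) -> tuple[bool, str]:
    """Deterministic rules evaluated in code, not in the prompt."""
    if action.name not in ALLOWED_ACTIONS:
        return False, f"unknown action {action.name!r}"
    if action.name == "issue_refund" and action.amount > REFUND_LIMIT:
        return False, f"refund {action.amount} exceeds limit {REFUND_LIMIT}"
    return True, "ok"


def gate(action: ProposedAction, audit_log: list[str]) -> bool:
    """Check the action and append one JSON audit record, allowed or not."""
    allowed, reason = check_policy(action)
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "action": asdict(action),
        "allowed": allowed,
        "reason": reason,
    }
    audit_log.append(json.dumps(record))
    return allowed


log: list[str] = []
print(gate(ProposedAction("issue_refund", 120.0, "c-42"), log))    # True
print(gate(ProposedAction("issue_refund", 900.0, "c-42"), log))    # False
print(gate(ProposedAction("delete_account", 0.0, "c-42"), log))    # False
print(len(log))  # 3
```

Because the check lives in code rather than in the prompt, the same proposal always yields the same decision, and a tool name the model invents is rejected and logged instead of executed.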

Source Card

8 Best AI Agent Frameworks for Enterprise in 2026. This source provides a detailed comparison of AI agent frameworks, focusing on their suitability for enterprise production environments.

Rasa Blog

| Signal | Why it matters |
|---|---|
| Self-hosted deployment | Ensures data sovereignty and compliance in regulated industries. |
| Multi-agent orchestration | Supports complex workflows and cross-skill coordination. |
| Voice capability | Enables seamless integration across voice and digital channels. |
| Extensibility | Allows customization at the engine level for unique enterprise needs. |

- Evaluate frameworks based on production readiness, not demo performance.

- Prioritize governance features like audit trails and deterministic logic.

- Consider deployment flexibility for data sovereignty and compliance.

- Assess voice capabilities for multi-channel continuity.

- Rasa CALM: Best for enterprise production.

- LangChain: Ideal for prototyping and integrations.

- CrewAI: Specializes in multi-agent collaboration.

- Vellum: Simplifies deployment with low-code/no-code options.

- https://rasa.com/blog/best-ai-agent-framework

Builder implications

For teams evaluating AI agent frameworks for enterprise production, the useful question is not whether a source sounds important but whether it changes how an agent system is built, tested, operated, or bought. The source, "8 Best AI Agent Frameworks for Enterprise in 2026" on the Rasa Blog (rasa.com), gives builders a concrete signal to inspect. Map that signal against the parts of an agent stack that usually become fragile first: tool contracts, long-running state, evaluation coverage, cost visibility, failure recovery, and the handoff between prototype code and production operations.

Production lens

Treat this as a systems decision, not a headline decision. A builder should ask how the change affects the agent loop, what needs to be measured, which failure modes become easier to catch, and whether the team can explain the behavior to a customer or operator when something goes wrong. If the answer is vague, the technology may still be useful, but it is not yet a production advantage.

Adoption checklist

- Identify a workflow where weak production readiness or governance already creates measurable pain, such as slow triage, brittle handoffs, unclear ownership, or poor observability.

- Write down the current baseline before changing the stack: latency, cost per run, recovery rate, review time, and the percentage of tasks that need human correction.

- Prototype against a real internal workflow instead of a demo task. The workflow should include imperfect inputs, missing context, tool failures, and at least one approval step.

- Add traces, event logs, and evaluation checkpoints before expanding usage. A new framework or model is hard to judge when the team cannot see where the agent made its decision.

- Keep rollback boring. The first version should let an operator pause automation, inspect the last decision, and return control to a human without losing state.

- Review the source again after testing. The source-backed claim should line up with observed behavior in your own environment, not just with launch copy or release notes.
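
Recording the baseline from the checklist can be as simple as aggregating per-run records. The sketch below assumes a hypothetical `RunRecord` schema exported from your own logs; the field names and sample numbers are illustrative, not drawn from the source.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class RunRecord:
    """One agent run as it might be exported from logs (hypothetical schema)."""
    latency_s: float
    cost_usd: float
    succeeded: bool
    hit_failure: bool      # a tool error, timeout, or bad input occurred during the run
    recovered: bool        # the run still completed after that failure
    human_corrected: bool  # an operator had to fix the output


def baseline(runs: list[RunRecord]) -> dict[str, float]:
    """Summarize the checklist's baseline metrics over a batch of runs."""
    if not runs:
        raise ValueError("no runs recorded")
    failures = [r for r in runs if r.hit_failure]
    successes = [r for r in runs if r.succeeded]
    total_cost = sum(r.cost_usd for r in runs)
    return {
        "median_latency_s": median(r.latency_s for r in runs),
        "cost_per_run_usd": total_cost / len(runs),
        # Recovery rate is undefined (NaN) when no run hit a failure.
        "recovery_rate": sum(r.recovered for r in failures) / len(failures) if failures else float("nan"),
        "human_correction_pct": 100 * sum(r.human_corrected for r in runs) / len(runs),
        # Cost per successful task is the figure to compare with the old workflow.
        "cost_per_success_usd": total_cost / len(successes) if successes else float("inf"),
    }


runs = [
    RunRecord(2.0, 0.04, True, False, False, False),
    RunRecord(3.0, 0.06, True, True, True, True),
    RunRecord(9.0, 0.10, False, True, False, True),
    RunRecord(4.0, 0.05, True, False, False, False),
]
metrics = baseline(runs)
print(metrics["median_latency_s"])      # 3.5
print(metrics["recovery_rate"])         # 0.5
print(metrics["human_correction_pct"])  # 50.0
```

Run the same summary before and after the framework change so both sides of the comparison use identical metric definitions.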

| Area | Question | Practical test |
|---|---|---|
| Reliability | Does the agent fail in a way operators can understand? | Run the same task with missing data, stale data, and a tool timeout. |
| Observability | Can the team reconstruct why a decision happened? | Inspect traces for inputs, tool calls, model outputs, approvals, and final state. |
| Cost | Does value scale faster than usage cost? | Compare cost per successful task against the old human or scripted workflow. |
| Governance | Can sensitive actions be reviewed or blocked? | Require approval on high-impact actions and log who approved the step. |
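
The reliability test in the first row can be scripted as a small fault-injection harness. This sketch assumes a hypothetical tool that returns an account record and a hypothetical agent step, `run_task`, that labels each failure mode explicitly; the 24-hour freshness rule and the 0.2-second timeout are arbitrary values chosen for illustration.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as FuturesTimeout
from datetime import datetime, timedelta, timezone

MAX_AGE = timedelta(hours=24)  # hypothetical freshness rule
TOOL_TIMEOUT_S = 0.2           # hypothetical tool-call budget


def run_task(fetch, executor: ThreadPoolExecutor) -> str:
    """Hypothetical agent step: call a tool, then return a labeled outcome."""
    future = executor.submit(fetch)
    try:
        record = future.result(timeout=TOOL_TIMEOUT_S)
    except FuturesTimeout:
        # Only the wait is abandoned; the worker thread keeps running.
        return "FAILED: tool_timeout"
    if record is None or "balance" not in record:
        return "FAILED: missing_data"
    if datetime.now(timezone.utc) - record["updated_at"] > MAX_AGE:
        return "FAILED: stale_data"
    return f"OK: balance={record['balance']}"


now = datetime.now(timezone.utc)
# The same task, run against one healthy tool and three injected faults.
scenarios = {
    "healthy": lambda: {"balance": 100, "updated_at": now},
    "missing_data": lambda: {"updated_at": now},
    "stale_data": lambda: {"balance": 100, "updated_at": now - timedelta(days=3)},
    "tool_timeout": lambda: time.sleep(0.5),
}

with ThreadPoolExecutor(max_workers=4) as executor:
    results = {name: run_task(fetch, executor) for name, fetch in scenarios.items()}

for name, outcome in results.items():
    print(f"{name:13} -> {outcome}")
```

The labels are the point: an operator reading the log sees `tool_timeout` or `stale_data` rather than a stack trace or a confidently wrong answer. Because `future.result(timeout=...)` does not stop the worker thread, a production harness would also need the tool itself to honor a timeout.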

What to watch next

The next signal to watch is whether builders start publishing implementation notes, migration stories, benchmarks, or reliability reports around this source. That secondary evidence matters because agent infrastructure often looks clean at release time and only shows its real shape once teams connect it to messy business workflows. Strong follow-on evidence would include reproducible examples, clear limits, documented failure recovery, and customer stories that describe what changed in the operating model.

Key Takeaways

- Do not treat a release as automatically production-ready because it comes from a strong source.

- Use the source as a reason to test a specific workflow, not as a reason to rewrite the entire stack.

- The best early signal is not novelty. It is whether the system becomes easier to observe, recover, and improve.