AI agents are no longer just experimental prototypes; they are becoming integral to production systems across industries. However, deploying these agents requires a shift in engineering practices to account for their autonomous reasoning, adaptive behavior, and complex orchestration needs. This article explores the latest frameworks and methodologies for building production-ready AI agents, based on insights from Google Cloud's engineering team.

Core Architecture of AI Agents

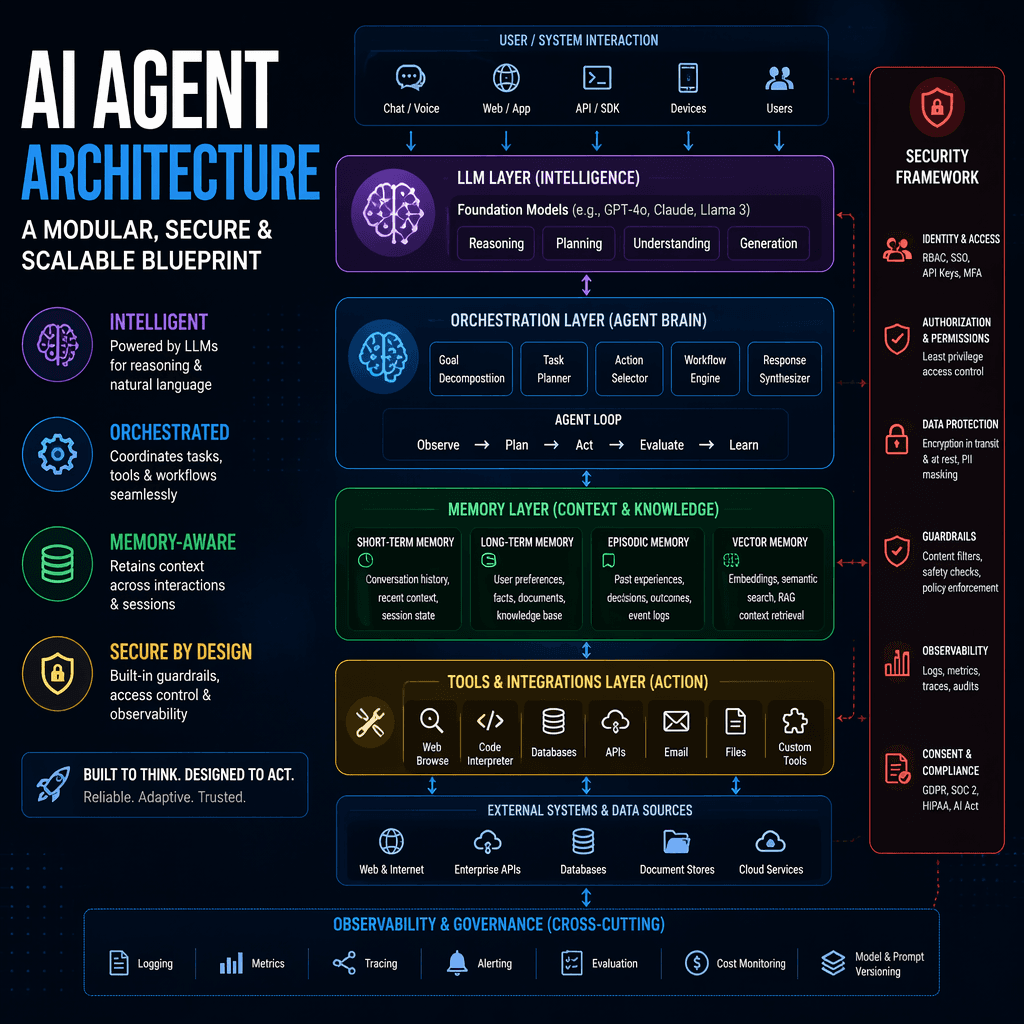

At the heart of an AI agent is a large language model (LLM) that serves as its cognitive engine. This model enables the agent to understand tasks, generate responses, and make decisions based on context. Surrounding the LLM is an orchestration layer that manages communication, data flow, and tool integration. Key components include short-term memory for session state, long-term memory for persistent recall, retrieval-augmented generation (RAG) for accessing external knowledge, and modules for executing actions. A robust security framework ensures safe and ethical operation.

Key Takeaways

- AI agents require specialized architectures to manage reasoning, memory, and tool integration.

- The orchestration layer acts as the nervous system, coordinating data flow and external interactions.

- Security frameworks are essential to ensure safe and trustworthy agent behavior.

Agents run an iterative loop: Think, Act, Observe. Each cycle feeds the latest observation back into the next round of reasoning, so the agent can refine its approach and adapt to new context.
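The Think, Act, Observe cycle can be sketched in a few lines. This is a minimal illustration, not a framework API: `Agent`, `Step`, `scripted_policy`, and the `add` tool are hypothetical names, and the policy function stands in for a real LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    thought: str
    action: str
    observation: str


@dataclass
class Agent:
    # `policy` stands in for the LLM (an assumption for this sketch):
    # it maps (task, history) to (thought, tool_name, tool_argument).
    policy: Callable[[str, list], tuple]
    tools: dict
    history: list = field(default_factory=list)

    def run(self, task: str, max_steps: int = 5) -> str:
        for _ in range(max_steps):
            # Think: decide what to do next from the task and prior steps.
            thought, tool_name, arg = self.policy(task, self.history)
            if tool_name == "finish":
                return arg
            # Act: call the selected tool.
            observation = self.tools[tool_name](arg)
            # Observe: record the result so the next cycle can use it.
            self.history.append(Step(thought, f"{tool_name}({arg!r})", observation))
        return "stopped: step budget exhausted"


def scripted_policy(task, history):
    # Hypothetical stand-in for a model call: one tool use, then finish.
    if not history:
        return ("I need the sum first.", "add", "2,3")
    return ("I have the result.", "finish", history[-1].observation)


def add(arg: str) -> str:
    a, b = arg.split(",")
    return str(int(a) + int(b))


agent = Agent(policy=scripted_policy, tools={"add": add})
result = agent.run("What is 2 + 3?")
```

The `max_steps` budget is a design choice worth keeping in any real loop: it bounds cost and prevents an agent from cycling indefinitely when no tool call makes progress.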

Interoperability Protocols: MCP and A2A

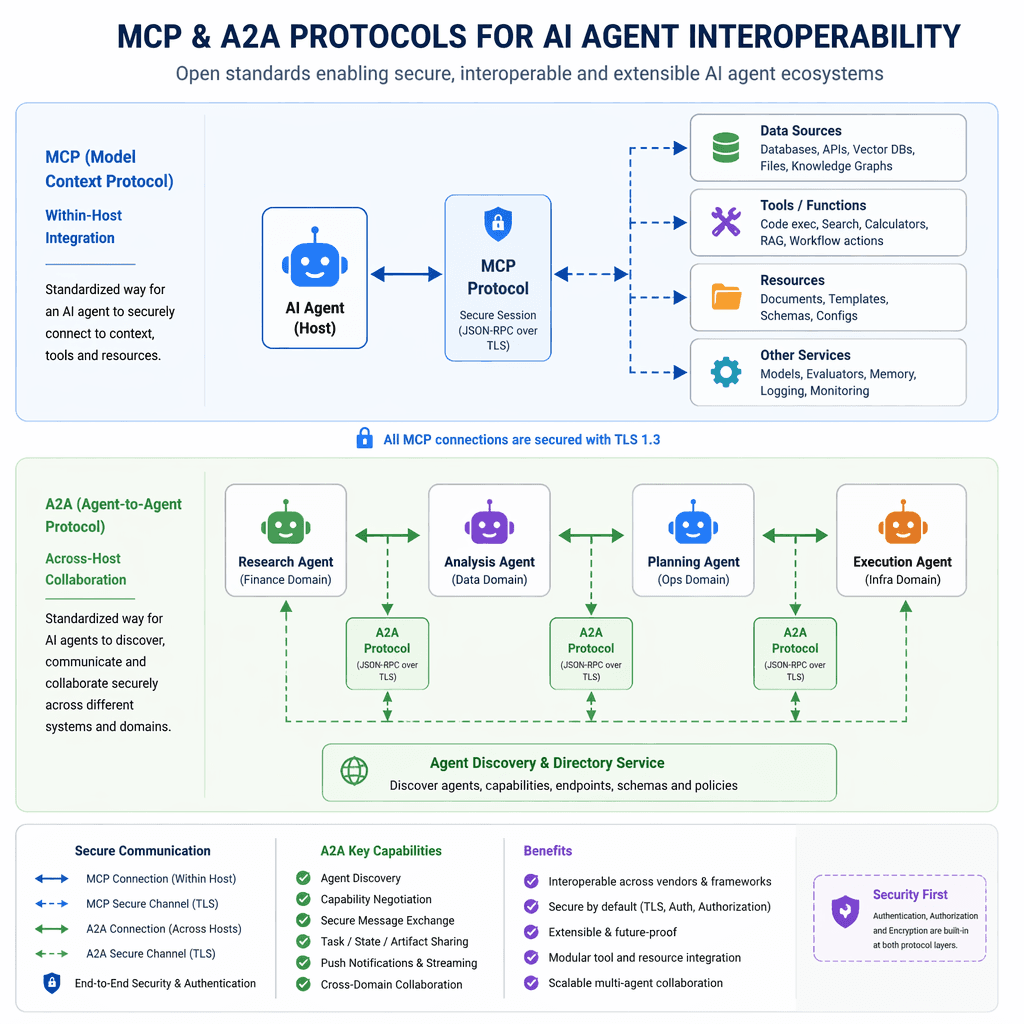

For agents to operate effectively in complex ecosystems, interoperability is crucial. Two emerging protocols address this need: Anthropic's Model Context Protocol (MCP) and Google's Agent2Agent Protocol (A2A). MCP standardizes connections between agents and external tools, simplifying development and enhancing compatibility. A2A enables direct communication between agents, allowing them to discover capabilities, negotiate interactions, and collaborate securely. These protocols lay the foundation for interconnected agent ecosystems.
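To make the idea of a standardized tool connection concrete, here is a rough sketch of what a tool invocation message might look like. It assumes the JSON-RPC 2.0 framing and the `tools/call` method name from the published MCP specification; the tool name `get_weather` and its arguments are hypothetical, and transport, initialization, and error handling are omitted. Check the current specification before relying on any field.

```python
import json
from itertools import count

_ids = count(1)  # simple request-id generator for this sketch


def tool_call_request(name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request shaped like an MCP tool invocation.

    Assumption: field names follow the published MCP spec; verify before use.
    """
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


# `get_weather` is a hypothetical tool used only for illustration.
request = tool_call_request("get_weather", {"city": "Zurich"})
decoded = json.loads(request)
```

Because every tool call shares one envelope, the agent side needs a single client implementation regardless of how many tools a server exposes.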

Builder note

Adopting MCP and A2A can significantly reduce integration complexity and improve scalability in multi-agent systems.

Source Card

A dev's guide to production-ready AI agents

This source provides foundational insights into the engineering challenges and solutions for deploying AI agents in production environments.

Google Cloud Blog

| Signal | Why it matters |
|---|---|
| Session management | Maintains context across interactions for coherent agent behavior. |
| Persistent memory systems | Enables long-term recall and personalization. |
| Tool integration | Expands agent capabilities through external services. |
| Real-time logging | Facilitates debugging and decision tracing. |

Context Engineering: Feeding the Brain

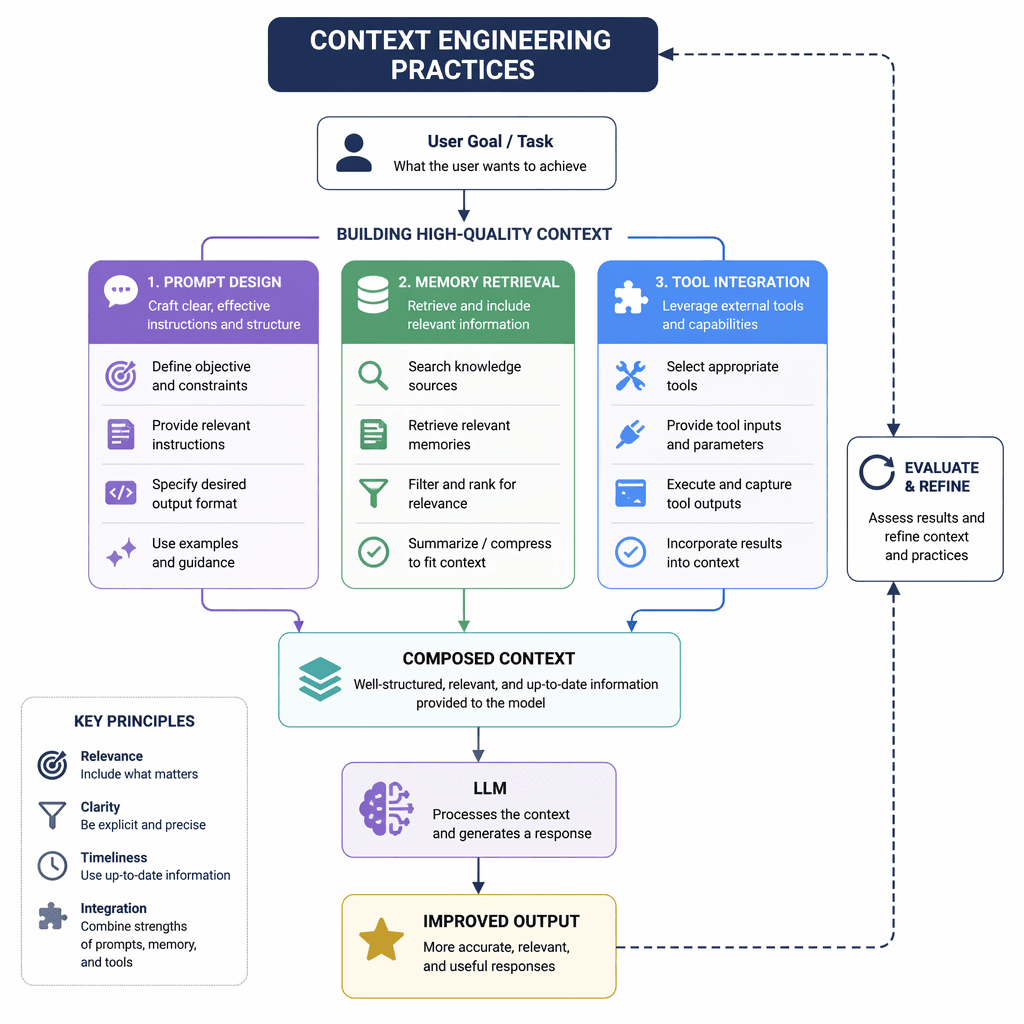

Context engineering is the practice of providing the LLM with the right information at the right time. This involves designing prompts, selecting tools, retrieving relevant memories, and maintaining conversation history. Effective context engineering transforms a generic model into a personalized assistant, ensuring coherent and helpful interactions. Without it, agents risk forgetting, repeating themselves, or misunderstanding user intent.

- Design prompts that align with the agent's objectives.

- Implement retrieval mechanisms for relevant data.

- Integrate tools that enhance agent functionality.

- Maintain session history for continuity across interactions.
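The steps above can be sketched as a single context-assembly function. This is an illustration under stated assumptions: `Memory`, `retrieve`, and `build_context` are hypothetical names, and keyword overlap is a toy stand-in for whatever retrieval method (for example, vector search) a real system would use.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    keywords: set


def retrieve(memories, query, k=2):
    """Rank memories by keyword overlap with the query (toy stand-in for real retrieval)."""
    words = set(query.lower().split())
    scored = [(len(m.keywords & words), m) for m in memories]
    relevant = [s for s in scored if s[0] > 0]
    ranked = sorted(relevant, key=lambda s: s[0], reverse=True)
    return [m.text for _, m in ranked[:k]]


def build_context(system_prompt, memories, history, user_message, max_turns=4):
    """Assemble prompt, recalled memories, recent turns, and the new message."""
    recalled = retrieve(memories, user_message)
    recent = history[-max_turns:]  # keep only the latest turns to bound context size
    parts = [system_prompt]
    if recalled:
        parts.append("Relevant memories:\n" + "\n".join(f"- {m}" for m in recalled))
    parts.extend(f"{role}: {text}" for role, text in recent)
    parts.append(f"user: {user_message}")
    return "\n\n".join(parts)


memories = [
    Memory("User prefers metric units.", {"units", "metric", "temperature"}),
    Memory("User's home airport is ZRH.", {"flight", "airport", "travel"}),
]
history = [("user", "Hi"), ("assistant", "Hello! How can I help?")]
context = build_context(
    "You are a travel assistant.", memories, history, "Find me a flight to Oslo"
)
```

Here only the airport memory is recalled, because it is the only one that overlaps with the request. Filtering out irrelevant memories matters as much as retrieving relevant ones, since everything placed in the context competes for the model's attention.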

Testing and Evaluation: Ensuring Quality

Testing AI agents requires new methodologies that focus on trajectories rather than static outputs. Evaluation should examine the full sequence of decisions and actions, including tool selection, reasoning quality, and error recovery. Staged rollouts, from sandbox environments to production, help validate agent behavior incrementally. Unit tests for components and trajectory analysis for multi-step processes are essential for ensuring reliability.
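A trajectory check might look like the following sketch. It scores a run on the order of its tool calls and on whether failures were followed by a successful call, rather than on the final answer alone. `ToolCall` and `evaluate_trajectory` are hypothetical names, and the "recovered" rule is a deliberately simple assumption.

```python
from dataclasses import dataclass


@dataclass
class ToolCall:
    tool: str
    ok: bool = True


def evaluate_trajectory(actual, expected_tools):
    """Score a run on its sequence of tool calls, not only its final output."""
    actual_tools = [c.tool for c in actual]
    # In-order match: expected tools must appear in sequence; extra calls are tolerated.
    remaining = iter(actual_tools)
    in_order = all(tool in remaining for tool in expected_tools)
    failures = [i for i, c in enumerate(actual) if not c.ok]
    # Simplifying assumption: a failure counts as recovered if any later call succeeded.
    recovered = all(any(c.ok for c in actual[i + 1:]) for i in failures)
    return {
        "in_order": in_order,
        "extra_calls": max(0, len(actual_tools) - len(expected_tools)),
        "failures": len(failures),
        "recovered": recovered,
    }


run = [
    ToolCall("search"),
    ToolCall("fetch", ok=False),  # simulated tool failure
    ToolCall("fetch"),            # retry succeeds
    ToolCall("summarize"),
]
report = evaluate_trajectory(run, ["search", "fetch", "summarize"])
```

For this run the report shows the expected order was followed, with one extra call and one failure that was recovered. A check like this can run in a sandbox stage before any production rollout.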

- Unit tests validate individual components.

- Trajectory analysis examines decision sequences.

- Staged rollouts mitigate risks during deployment.

- https://cloud.google.com/blog/products/ai-machine-learning/a-devs-guide-to-production-ready-ai-agents

- https://www.kaggle.com/whitepaper-introduction-to-agents

- https://www.kaggle.com/whitepaper-agent-tools-and-interoperability-with-mcp

- https://www.kaggle.com/whitepaper-context-engineering-sessions-and-memory

- https://www.kaggle.com/whitepaper-agent-quality

Builder implications

For teams evaluating these frameworks and practices, the useful question is not whether the guidance sounds important. The useful question is whether it changes how an agent system is built, tested, operated, or bought. The Google Cloud Blog source, "A dev's guide to production-ready AI agents", gives builders a concrete signal to inspect. Map that signal against the parts of an agent stack that usually become fragile first: tool contracts, long-running state, evaluation coverage, cost visibility, failure recovery, and the handoff between prototype code and production operations.

Production lens

Treat this as a systems decision, not a headline decision. A builder should ask how the change affects the agent loop, what needs to be measured, which failure modes become easier to catch, and whether the team can explain the behavior to a customer or operator when something goes wrong. If the answer is vague, the technology may still be useful, but it is not yet a production advantage.

Adoption checklist

- Identify a workflow where running agents already creates measurable pain, such as slow triage, brittle handoffs, unclear ownership, or poor observability.

- Write down the current baseline before changing the stack: latency, cost per run, recovery rate, review time, and the percentage of tasks that need human correction.

- Prototype against a real internal workflow instead of a demo task. The workflow should include imperfect inputs, missing context, tool failures, and at least one approval step.

- Add traces, event logs, and evaluation checkpoints before expanding usage. A new framework or model is hard to judge when the team cannot see where the agent made its decision.

- Keep rollback boring. The first version should let an operator pause automation, inspect the last decision, and return control to a human without losing state.

- Review the source again after testing. The source-backed claim should line up with observed behavior in your own environment, not just with launch copy or release notes.
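Recording the baseline from the checklist can be as simple as the sketch below. The `Run` record and its field names are hypothetical, and only a subset of the listed metrics is covered: latency, cost per run, cost per successful run, and the share of runs that needed human correction.

```python
from dataclasses import dataclass


@dataclass
class Run:
    latency_s: float
    cost_usd: float
    succeeded: bool
    needed_human_fix: bool


def baseline(runs):
    """Summarize a batch of runs into baseline metrics for later comparison."""
    if not runs:
        raise ValueError("need at least one run to compute a baseline")
    n = len(runs)
    successes = sum(r.succeeded for r in runs)
    total_cost = sum(r.cost_usd for r in runs)
    return {
        "mean_latency_s": sum(r.latency_s for r in runs) / n,
        "cost_per_run_usd": total_cost / n,
        # Cost per *successful* run penalizes failures that still consume budget.
        "cost_per_success_usd": total_cost / successes if successes else float("inf"),
        "human_correction_rate": sum(r.needed_human_fix for r in runs) / n,
    }


# Illustrative numbers only, not measurements.
runs = [
    Run(2.0, 0.25, True, False),
    Run(4.0, 0.25, True, True),
    Run(6.0, 0.50, False, True),
    Run(4.0, 0.50, True, False),
]
metrics = baseline(runs)
```

Capturing these numbers before changing the stack gives the later comparison something fixed to measure against.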

| Area | Question | Practical test |
|---|---|---|
| Reliability | Does the agent fail in a way operators can understand? | Run the same task with missing data, stale data, and a tool timeout. |
| Observability | Can the team reconstruct why a decision happened? | Inspect traces for inputs, tool calls, model outputs, approvals, and final state. |
| Cost | Does value scale faster than usage cost? | Compare cost per successful task against the old human or scripted workflow. |
| Governance | Can sensitive actions be reviewed or blocked? | Require approval on high-impact actions and log who approved the step. |
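The governance test in the table can be prototyped with a small approval gate. This is a sketch under assumptions: `ApprovalGate`, the `HIGH_IMPACT` action names, and the `refund` handler are hypothetical, and a real system would persist the audit log and verify the approver's identity.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical action names that require a human approver.
HIGH_IMPACT = {"issue_refund", "delete_record"}


@dataclass
class ApprovalGate:
    audit_log: list = field(default_factory=list)

    def execute(self, action, handler, payload, approver=None):
        """Run `handler` only if the action is low-impact or carries a named approver."""
        needs_approval = action in HIGH_IMPACT
        allowed = (not needs_approval) or approver is not None
        # Log every attempt, including blocked ones, so decisions can be reconstructed.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "approver": approver,
            "allowed": allowed,
        })
        if not allowed:
            return {"status": "blocked", "reason": "approval required"}
        return {"status": "done", "result": handler(payload)}


def refund(payload):
    # Hypothetical handler standing in for a real side effect.
    return f"refunded {payload['amount']}"


gate = ApprovalGate()
blocked = gate.execute("issue_refund", refund, {"amount": 40})
approved = gate.execute("issue_refund", refund, {"amount": 40}, approver="alice")
```

Logging blocked attempts alongside approved ones keeps the audit trail complete, which supports the observability row as well as the governance row.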

What to watch next

The next signal to watch is whether builders start publishing implementation notes, migration stories, benchmarks, or reliability reports around this source. That secondary evidence matters because agent infrastructure often looks clean at release time and only shows its real shape once teams connect it to messy business workflows. Strong follow-on evidence would include reproducible examples, clear limits, documented failure recovery, and customer stories that describe what changed in the operating model.

Key Takeaways

- Do not treat a release as automatically production-ready because it comes from a strong source.

- Use the source as a reason to test a specific workflow, not as a reason to rewrite the entire stack.

- The best early signal is not novelty. It is whether the system becomes easier to observe, recover, and improve.