AI agent frameworks are evolving rapidly, offering developers new tools and methodologies to build scalable, reliable, and context-aware systems. Recent releases highlight advancements in backend integration, retrieval-augmented generation (RAG), debugging tools, and context engineering strategies. These innovations aim to address common challenges in AI agent development, such as memory management, hallucination mitigation, and real-world deployment.

Unified Frameworks for Scalable Agent Workflows

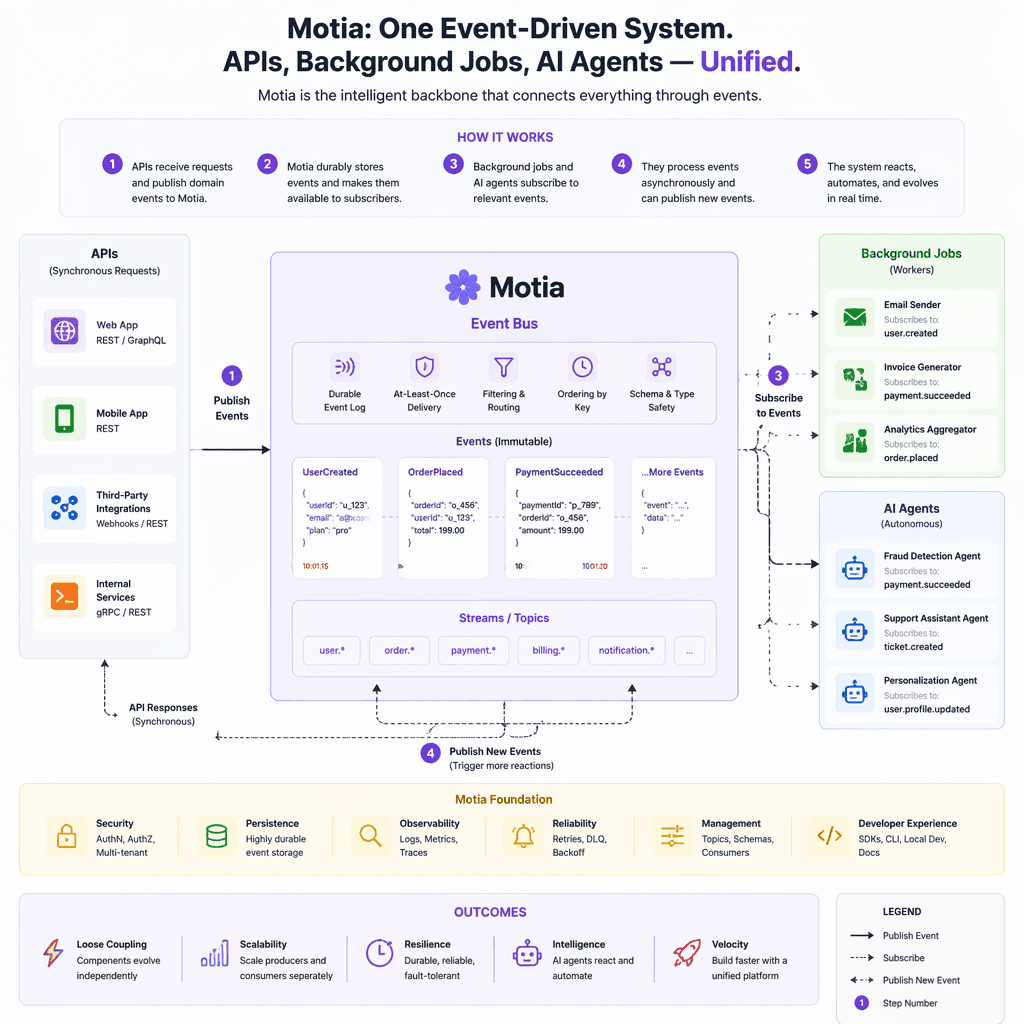

Motia, a backend framework, has gained traction for its ability to unify APIs, background jobs, events, and AI agents within a cohesive event-driven system. Supporting JavaScript, TypeScript, and Python, it offers built-in state management, observability, and one-click deployments. This framework simplifies the integration of AI agents into broader software ecosystems, making it easier for developers to manage workflows and scale applications.
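The event-driven pattern can be made concrete with a minimal in-process sketch in plain Python. This is not Motia's API. `EventBus`, the topic names, and the stubbed keyword classifier are hypothetical. The sketch only shows how an API entry point, an agent step, and a background job can communicate through events and a shared state store, with an event log standing in for observability.

```python
from __future__ import annotations

from collections import defaultdict
from typing import Callable

Handler = Callable[[dict, "EventBus"], None]


class EventBus:
    """Hypothetical in-process event bus with a shared state store (not Motia's API)."""

    def __init__(self) -> None:
        self.subscribers: dict[str, list[Handler]] = defaultdict(list)
        self.state: dict[str, dict] = {}  # per-request state, keyed by trace id
        self.log: list[tuple[str, dict]] = []  # every emitted event, for basic observability

    def subscribe(self, topic: str, handler: Handler) -> None:
        self.subscribers[topic].append(handler)

    def emit(self, topic: str, payload: dict) -> None:
        self.log.append((topic, payload))
        for handler in self.subscribers[topic]:
            handler(payload, self)


def api_create_ticket(bus: EventBus, trace_id: str, text: str) -> None:
    # API-style entry point: store the input and hand off via an event.
    bus.state[trace_id] = {"text": text, "status": "received"}
    bus.emit("ticket.created", {"trace_id": trace_id})


def classify(payload: dict, bus: EventBus) -> None:
    # "Agent" step: a keyword rule stands in for an LLM call.
    record = bus.state[payload["trace_id"]]
    record["category"] = "billing" if "invoice" in record["text"].lower() else "general"
    bus.emit("ticket.classified", payload)


def route(payload: dict, bus: EventBus) -> None:
    # Background job that reacts to the agent's output.
    record = bus.state[payload["trace_id"]]
    record["status"] = f"routed:{record['category']}"


bus = EventBus()
bus.subscribe("ticket.created", classify)
bus.subscribe("ticket.classified", route)
api_create_ticket(bus, "t-1", "Question about my invoice")
print(bus.state["t-1"]["status"])  # routed:billing
print([topic for topic, _ in bus.log])  # ['ticket.created', 'ticket.classified']
```

Because each step only reads shared state and emits events, you can replace the stubbed classifier with a real model call without touching the API or the routing job.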

In the Python ecosystem, an open-source RAG framework has emerged to facilitate conversational querying of SQL databases. By integrating large language models (LLMs) with RAG techniques, this framework supports accurate Text-to-SQL generation and provides hands-on examples for production-ready applications. Developers can leverage this tool to build scalable systems that combine semantic search with actionable insights.
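The retrieve-then-generate loop behind Text-to-SQL can be sketched as follows. The sketch makes two substitutions: a word-overlap retriever replaces embedding search, and a hypothetical `fake_llm` stub replaces a real model call. The schema text, table, and rows are made up. The read-only check is one simple guardrail, not a complete defense against unsafe generated SQL.

```python
from __future__ import annotations

import re
import sqlite3

# Schema descriptions the retriever searches; a real system would embed these.
SCHEMA_DOCS = {
    "orders": "orders(id INTEGER, customer TEXT, total REAL, created TEXT): one row per customer order",
    "products": "products(id INTEGER, name TEXT, price REAL): catalog of products",
}


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve_schema(question: str, k: int = 1) -> list[str]:
    """Rank schema docs by word overlap with the question (stand-in for semantic search)."""
    q = tokens(question)
    ranked = sorted(SCHEMA_DOCS.values(), key=lambda doc: len(q & tokens(doc)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, schema: list[str]) -> str:
    return "Schema:\n" + "\n".join(schema) + f"\nQuestion: {question}\nSQL:"


def fake_llm(prompt: str) -> str:
    """Hypothetical stub for a model call; always returns the same query."""
    return "SELECT customer, SUM(total) FROM orders GROUP BY customer ORDER BY customer"


def answer(conn: sqlite3.Connection, question: str) -> list[tuple]:
    sql = fake_llm(build_prompt(question, retrieve_schema(question)))
    # Guardrail: allow only a single read-only SELECT statement.
    if not sql.lstrip().upper().startswith("SELECT") or ";" in sql:
        raise ValueError(f"refusing to run generated SQL: {sql!r}")
    return conn.execute(sql).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders(id INTEGER, customer TEXT, total REAL, created TEXT)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?, ?)",
    [(1, "acme", 120.0, "2025-07-01"), (2, "acme", 30.0, "2025-07-02"), (3, "globex", 75.5, "2025-07-03")],
)
question = "What is the total spent per customer on orders?"
print(retrieve_schema(question))
print(answer(conn, question))  # [('acme', 150.0), ('globex', 75.5)]
```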

Context Engineering: A Critical Lever for Agent Reliability

Context engineering is increasingly recognized as a cornerstone of effective AI agent design. This practice involves managing the agent’s working memory by organizing and pruning context dynamically. Techniques include externalizing context for reuse, maintaining scratchpads, embedding long-term memories, and compressing past interactions. These strategies mitigate issues like context window limits and hallucinations, ensuring agents perform reliably in complex workflows.
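Two of these techniques are shown in the minimal sketch below: a scratchpad for externalized notes, and compression of older turns under a context budget. The sketch makes several assumptions. Word counts stand in for a tokenizer. The hypothetical `summarize` function truncates turns where a real system would call a model. `WorkingMemory` and its fields are illustrative names, not a library API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


def n_tokens(text: str) -> int:
    # Crude proxy: count whitespace-separated words instead of model tokens.
    return len(text.split())


def summarize(turns: list[str]) -> str:
    # Hypothetical stand-in for an LLM summary: keep the first three words of each turn.
    return "Summary: " + " | ".join(" ".join(t.split()[:3]) for t in turns)


@dataclass
class WorkingMemory:
    budget: int  # maximum size of the rendered context, in words
    scratchpad: dict[str, str] = field(default_factory=dict)  # notes that survive compression
    turns: list[str] = field(default_factory=list)  # recent turns kept verbatim
    summarized: list[str] = field(default_factory=list)  # older turns folded into the summary

    def add_turn(self, text: str) -> None:
        self.turns.append(text)
        # Fold the oldest verbatim turns into the summary until the context fits the budget.
        # The newest turn always stays verbatim, so the loop terminates.
        while n_tokens(self.render()) > self.budget and len(self.turns) > 1:
            self.summarized.append(self.turns.pop(0))

    def render(self) -> str:
        parts: list[str] = []
        if self.scratchpad:
            parts.append("Notes: " + "; ".join(f"{k}={v}" for k, v in self.scratchpad.items()))
        if self.summarized:
            parts.append(summarize(self.summarized))
        parts.extend(self.turns)
        return "\n".join(parts)


mem = WorkingMemory(budget=20)
mem.scratchpad["goal"] = "refund"
for turn in [
    "user: I was charged twice for order 123",
    "agent: checking the billing records for order 123 now",
    "tool: billing shows two charges of 40 dollars",
]:
    mem.add_turn(turn)

print(mem.render())
```

After the third turn, the two oldest turns have been folded into the summary line. The scratchpad note and the newest turn remain intact.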

Key Takeaways

- Externalizing context enables reuse across sessions.

- Dynamic embedding search selects relevant context segments.

- Summarization prevents memory bloat in long-term interactions.

- Multi-agent environments isolate context for scalability.
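Dynamic selection of context segments can be sketched with cosine similarity. The sketch uses a bag-of-words `Counter` as a stand-in for a learned embedding model. `select_context`, `k`, and `min_score` are hypothetical names and thresholds chosen for illustration.

```python
from __future__ import annotations

import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy "embedding": term counts. A real system would call an embedding model here.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def select_context(query: str, segments: list[str], k: int = 2, min_score: float = 0.1) -> list[str]:
    """Return up to k stored segments most similar to the query, best first."""
    q = embed(query)
    scored = sorted(((cosine(q, embed(s)), s) for s in segments), reverse=True)
    return [s for score, s in scored[:k] if score >= min_score]


memory = [
    "User prefers invoices in PDF format",
    "Deployment runs on Kubernetes in eu-west",
    "User asked about invoice due dates last week",
]
selected = select_context("send the user their invoice", memory)
print(selected)
```

With a real embedding model, only `embed` changes. The ranking and threshold logic stay the same, and unrelated segments such as the deployment note are kept out of the prompt.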

Context engineering is the AI agent equivalent of feature engineering, transforming informal 'vibe coding' into systematic workflows.

Debugging and Development Productivity Tools

Debugging AI agents remains a significant challenge, but tools like NeatLogs are addressing this gap. NeatLogs provides comprehensive tracing of agent thoughts, tool usage, and responses, enabling collaborative feedback management directly on trace logs. This platform automates task creation from identified issues and consolidates debugging artifacts, reducing ambiguity in agent behaviors.
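The kind of trace such tools capture can be approximated with nested spans. This sketch does not use NeatLogs or its SDK. `span`, the in-memory `TRACE` list, and the simulated `lookup_order` timeout are assumptions. They show how thoughts, tool calls, and responses can be linked to a parent run so that a failure can be reconstructed afterward.

```python
from __future__ import annotations

import json
import time
import uuid
from contextlib import contextmanager

TRACE: list[dict] = []  # in-memory sink; a real setup would ship records to a tracing backend
_stack: list[str] = []  # ids of currently open spans, innermost last


@contextmanager
def span(kind: str, name: str, **attrs):
    """Record one step of an agent run (thought, tool call, response) under its parent."""
    record = {
        "id": uuid.uuid4().hex[:8],
        "parent": _stack[-1] if _stack else None,
        "kind": kind,
        "name": name,
        "attrs": attrs,
        "start": time.time(),
    }
    _stack.append(record["id"])
    try:
        yield record
        record["status"] = "ok"
    except Exception as exc:
        record["status"] = f"error: {exc}"
        raise  # record the failure, then let the caller handle it
    finally:
        _stack.pop()
        record["duration_s"] = round(time.time() - record["start"], 4)
        TRACE.append(record)


def lookup_order(order_id: str) -> dict:
    raise TimeoutError("orders API timed out")  # simulated tool failure


with span("run", "support-agent", user="u-42"):
    with span("thought", "plan", text="Need order status before replying"):
        pass
    try:
        with span("tool", "lookup_order", order_id="123"):
            lookup_order("123")
    except TimeoutError:
        with span("response", "fallback", text="Order system is slow; escalating to a human"):
            pass

for rec in TRACE:
    print(json.dumps({k: rec[k] for k in ("kind", "name", "parent", "status")}))
```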

Sketchflow.ai offers another productivity boost by enabling rapid prototyping of interactive AI-driven UIs. With real-time collaboration features, developers can streamline design-to-development handoffs, accelerating the creation of user-friendly AI applications.

Advances in Retrieval-Augmented Generation and Semantic Search

RAG systems are evolving beyond textual retrieval to action-oriented retrieval, where queries can trigger API calls or initiate workflows autonomously. Approaches like ColBERT improve retrieval precision at scale by encoding queries and documents as contextual token embeddings and comparing them through a late-interaction mechanism. Developers are increasingly pairing semantic search services such as Pinecone with deployment frameworks to build efficient AI applications.
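ColBERT's late interaction scores a query against a document in two steps. First, each query token embedding keeps only its best-matching document token embedding (MaxSim). Second, those maxima are summed. The sketch below uses made-up 3-dimensional vectors and a plain dot product in place of cosine similarity over normalized embeddings. Real ColBERT vectors are learned and much larger, and production systems use approximate indexes rather than this brute-force loop.

```python
from __future__ import annotations

Vector = list[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def maxsim(query_tokens: list[Vector], doc_tokens: list[Vector]) -> float:
    """Late-interaction score: each query token keeps only its best-matching document token."""
    return sum(max(dot(q, d) for d in doc_tokens) for q in query_tokens)


def rank(query_tokens: list[Vector], docs: dict[str, list[Vector]]) -> list[tuple[str, float]]:
    scores = {name: maxsim(query_tokens, toks) for name, toks in docs.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Toy token embeddings, invented for illustration.
query = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
docs = {
    "doc_a": [[0.9, 0.1, 0.0], [0.1, 0.8, 0.1]],  # reasonably close to both query tokens
    "doc_b": [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]],  # exact on one query token, unrelated to the other
}
print(rank(query, docs))
```

`doc_a` wins (about 1.7 versus 1.0) because every query token finds a good match in it. A single pooled document vector would blur this per-token signal.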

Builder note

When adopting RAG frameworks, prioritize tools that support dynamic context management and scalable retrieval mechanisms. Ensure compatibility with your existing deployment stack to minimize integration overhead.

Source Card

AI Agent Frameworks and Development Updates

This source provides insights into the latest advancements in AI agent frameworks, emphasizing tools and practices for improving reliability, scalability, and context engineering.

SingleApi

| Signal | Why it matters |
|---|---|
| Motia framework | Simplifies backend integration for scalable workflows. |
| RAG for SQL | Enables accurate Text-to-SQL generation with LLMs. |
| NeatLogs | Improves debugging and traceability for AI agents. |
| Context engineering | Enhances agent reliability and memory management. |

- Adopt frameworks with built-in observability and state management.

- Leverage RAG techniques for actionable insights in data-heavy applications.

- Implement systematic context engineering to mitigate hallucinations.

- Use debugging tools like NeatLogs to streamline issue resolution.

- Motia: Event-driven backend framework for unified workflows.

- RAG frameworks: Conversational querying and semantic search integration.

- NeatLogs: Debugging platform for tracing agent behaviors.

- Sketchflow.ai: Rapid prototyping for AI-driven UIs.

- AI Agent Frameworks and Development Updates - SingleApi: https://www.singleapi.net/2025/07/16/ai-agent-frameworks-and-development-updates

Builder implications

For teams evaluating these framework updates, the useful question is not whether an announcement sounds important but whether it changes how an agent system is built, tested, operated, or bought. The SingleApi roundup (AI Agent Frameworks and Development Updates) gives builders concrete signals to inspect. Map each one against the parts of an agent stack that usually become fragile first: tool contracts, long-running state, evaluation coverage, cost visibility, failure recovery, and the handoff between prototype code and production operations.

Production lens

Treat this as a systems decision, not a headline decision. A builder should ask how the change affects the agent loop, what needs to be measured, which failure modes become easier to catch, and whether the team can explain the behavior to a customer or operator when something goes wrong. If the answer is vague, the technology may still be useful, but it is not yet a production advantage.

Adoption checklist

- Identify a workflow where weak context management, retrieval, or debugging already creates measurable pain, such as slow triage, brittle handoffs, unclear ownership, or poor observability.

- Write down the current baseline before changing the stack: latency, cost per run, recovery rate, review time, and the percentage of tasks that need human correction.

- Prototype against a real internal workflow instead of a demo task. The workflow should include imperfect inputs, missing context, tool failures, and at least one approval step.

- Add traces, event logs, and evaluation checkpoints before expanding usage. A new framework or model is hard to judge when the team cannot see where the agent made its decision.

- Keep rollback boring. The first version should let an operator pause automation, inspect the last decision, and return control to a human without losing state.

- Review the source again after testing. The source-backed claim should line up with observed behavior in your own environment, not just with launch copy or release notes.
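Recording the baseline from the checklist can start as a small script over past run records. The `Run` fields and the definition of recovery rate (the share of runs that hit an error and still finished) are assumptions. Adapt them to whatever your logs actually capture. The four sample runs are invented for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean, median


@dataclass
class Run:
    latency_s: float   # wall-clock time for the run
    cost_usd: float    # model and tool spend for the run
    failed: bool       # hit an error at some point
    recovered: bool    # finished successfully despite that error
    review_min: float  # minutes a human spent reviewing the output
    corrected: bool    # a human had to fix the output


def baseline(runs: list[Run]) -> dict[str, float]:
    failures = [r for r in runs if r.failed]
    return {
        "median_latency_s": median([r.latency_s for r in runs]),
        "cost_per_run_usd": sum(r.cost_usd for r in runs) / len(runs),
        # Assumed definition; with no failures there was nothing to recover, reported as 1.0.
        "recovery_rate": sum(r.recovered for r in failures) / len(failures) if failures else 1.0,
        "mean_review_min": mean([r.review_min for r in runs]),
        "pct_human_corrected": 100 * sum(r.corrected for r in runs) / len(runs),
    }


runs = [  # invented sample data
    Run(12.0, 0.04, False, False, 2.0, False),
    Run(30.0, 0.10, True, True, 5.0, True),
    Run(18.0, 0.06, True, False, 8.0, True),
    Run(9.0, 0.02, False, False, 1.0, False),
]
print(baseline(runs))
```

Rerunning the same function on runs from the new stack gives a like-for-like comparison with the old workflow.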

| Area | Question | Practical test |
|---|---|---|
| Reliability | Does the agent fail in a way operators can understand? | Run the same task with missing data, stale data, and a tool timeout. |
| Observability | Can the team reconstruct why a decision happened? | Inspect traces for inputs, tool calls, model outputs, approvals, and final state. |
| Cost | Does value scale faster than usage cost? | Compare cost per successful task against the old human or scripted workflow. |
| Governance | Can sensitive actions be reviewed or blocked? | Require approval on high-impact actions and log who approved the step. |
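The reliability test in this table can be scripted as a small fault-injection harness. The harness runs one task four times: with a normal tool response, with missing data, with stale data, and with a timeout. It then checks that every degraded case escalates with a readable reason. The order-lookup task, the `max_age` threshold, and the fetch stubs are hypothetical.

```python
from __future__ import annotations

from datetime import datetime, timedelta, timezone


class ToolTimeout(Exception):
    pass


NOW = datetime(2025, 7, 16, tzinfo=timezone.utc)  # fixed clock so results are repeatable


# Fetch stubs, one per scenario.
def fetch_ok(order_id: str) -> dict | None:
    return {"status": "shipped", "updated": NOW - timedelta(minutes=5)}


def fetch_missing(order_id: str) -> dict | None:
    return None


def fetch_stale(order_id: str) -> dict | None:
    return {"status": "processing", "updated": NOW - timedelta(days=3)}


def fetch_timeout(order_id: str) -> dict | None:
    raise ToolTimeout("orders API did not respond")


def agent_task(order_id: str, fetch, max_age: timedelta = timedelta(hours=1)) -> dict:
    """Hypothetical agent step: answer an order-status question, failing in a readable way."""
    try:
        record = fetch(order_id)
    except ToolTimeout as exc:
        return {"outcome": "escalate", "reason": f"tool timeout: {exc}"}
    if record is None:
        return {"outcome": "escalate", "reason": "missing data: no order record"}
    if NOW - record["updated"] > max_age:
        return {"outcome": "escalate", "reason": "stale data: record older than max_age"}
    return {"outcome": "answered", "reason": f"status={record['status']}"}


SCENARIOS = {"baseline": fetch_ok, "missing": fetch_missing, "stale": fetch_stale, "timeout": fetch_timeout}
results = {name: agent_task("123", fetch) for name, fetch in SCENARIOS.items()}
for name, result in results.items():
    print(f"{name:9} {result['outcome']:9} {result['reason']}")
```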

What to watch next

The next signal to watch is whether builders start publishing implementation notes, migration stories, benchmarks, or reliability reports around this source. That secondary evidence matters because agent infrastructure often looks clean at release time and only shows its real shape once teams connect it to messy business workflows. Strong follow-on evidence would include reproducible examples, clear limits, documented failure recovery, and customer stories that describe what changed in the operating model.

Key Takeaways

- Do not treat a release as automatically production-ready because it comes from a strong source.

- Use the source as a reason to test a specific workflow, not as a reason to rewrite the entire stack.

- The best early signal is not novelty. It is whether the system becomes easier to observe, recover, and improve.