Moltbot Shows the Agent Interface Is Moving Into Chat, but the Security Model Is Not Ready

Moltbot's viral run points to a practical agent pattern: local execution, chat-based control, persistent context, and dangerous levels of device access.
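To make the security gap concrete, here is a minimal sketch of the kind of gate a chat-controlled local agent needs between an incoming message and device access. Everything in it is hypothetical and does not describe how Moltbot itself works: the `Policy` class, the `Risk` tiers, and the sender IDs are illustrative. It assumes each chat message arrives with a verified sender identity and that every tool call can be classified by risk before it runs.

```python
from dataclasses import dataclass, field
from enum import Enum


class Risk(Enum):
    # Hypothetical risk tiers for actions a local agent can take on the device.
    READ = 1      # e.g. read a file, list a directory
    WRITE = 2     # e.g. modify files, send a message on the user's behalf
    EXECUTE = 3   # e.g. run an arbitrary shell command


@dataclass
class Policy:
    """Hypothetical per-sender policy for a chat-controlled local agent."""
    allowed_senders: set[str]
    max_unconfirmed_risk: Risk = Risk.READ
    audit_log: list[str] = field(default_factory=list)

    def decide(self, sender: str, action: str, risk: Risk, confirmed: bool = False) -> str:
        # Deny by default: only senders explicitly paired with this device get through.
        if sender not in self.allowed_senders:
            verdict = "deny"
        # Low-risk actions run directly; anything riskier needs explicit user confirmation.
        elif risk.value <= self.max_unconfirmed_risk.value or confirmed:
            verdict = "allow"
        else:
            verdict = "confirm"
        # Record every decision, including denials, so the owner can audit what was attempted.
        self.audit_log.append(f"{sender}\t{action}\t{risk.name}\t{verdict}")
        return verdict


# Example usage with made-up sender IDs and commands.
policy = Policy(allowed_senders={"owner@chat"})
print(policy.decide("owner@chat", "ls ~/notes", Risk.READ))               # allow
print(policy.decide("owner@chat", "rm -rf ~/tmp", Risk.EXECUTE))          # confirm
print(policy.decide("stranger@chat", "cat ~/.ssh/id_rsa", Risk.READ))     # deny
```

The design choice here is deny-by-default for unknown senders and confirm-by-default for anything above read access. A sender check like this is necessary but not sufficient: it does nothing about prompt injection, where text the agent reads from a web page, file, or forwarded message steers it into a risky action on behalf of a trusted sender.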

Agent Mag Read is the searchable archive for AI agent articles, engineering analysis, research coverage, and source-backed reporting for builders shipping agent systems.

The latest agent framework discussion points to a practical shift for builders: choose infrastructure by state, observability, human control, and lock-in risk, not by demo speed.

The most-starred agent repositories show a market moving from single demos toward visual builders, memory, browser control, local runtime options, and operational glue.

Microsoft Build 2025 is a signal that agent infrastructure is moving from demos toward managed identity, protocol interoperability, model routing, and production telemetry.

A new model wave, rising agent adoption, and power constraints are forcing builders to treat model choice, orchestration, safety, and infrastructure cost as one design surface.

Perplexity Computer's tax-prep signal is less about taxes and more about the infrastructure agents need before they can safely touch regulated workflows.

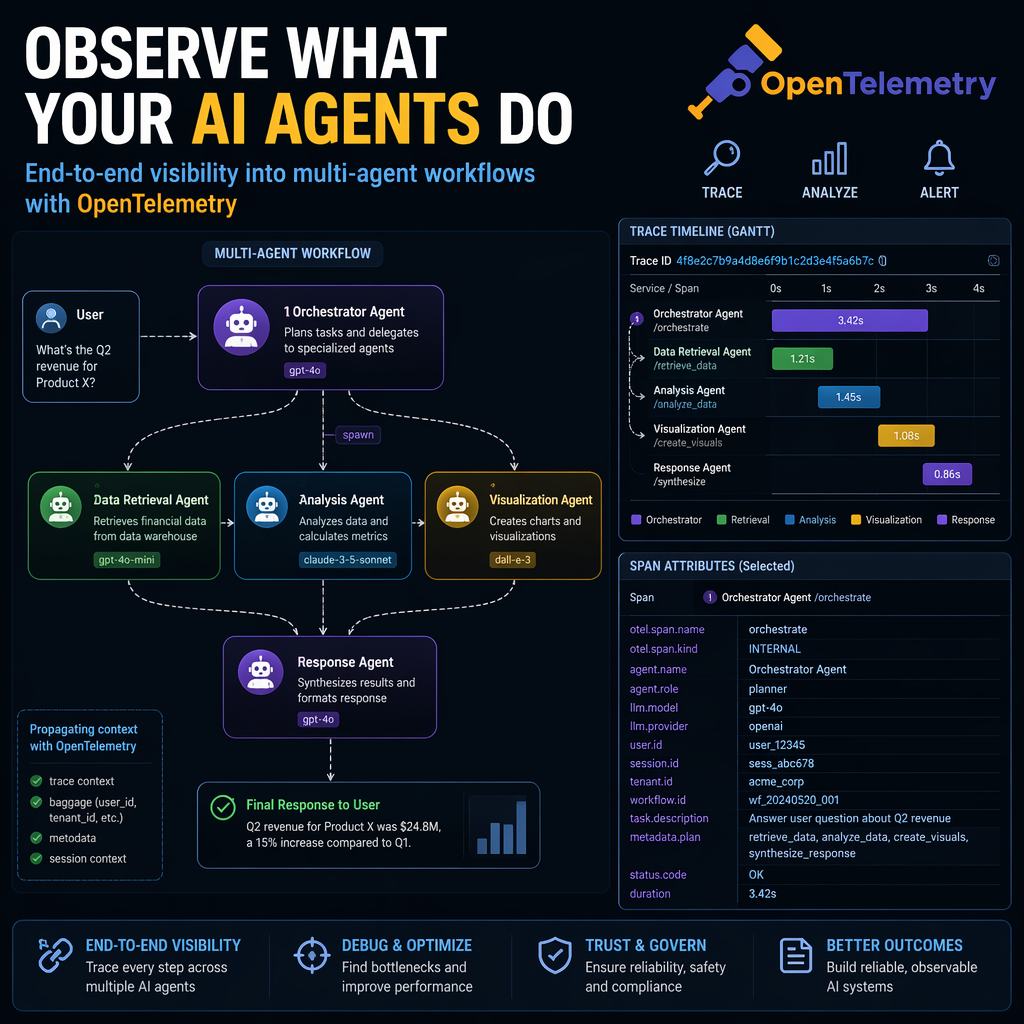

Production agent teams need observability, but traces only become useful when they are tied to explicit evaluation contracts, cost limits, and release decisions.

Agent builders should treat wide area networking as part of the agent runtime, because tool use, retrieval, distributed inference, and audit trails can turn latency and bandwidth into product constraints.

Red Hat Summit's agentic AI signal points to a bigger builder shift: teams need governed inference, agent lifecycle controls, and operator-ready automation before agents can move beyond demos.

Anthropic's Research system shows why parallel agents can outperform a single agent on open-ended search, but only when the task is valuable, separable, and worth the token burn.

Production AI agents need traces, evaluations, cost accounting, and feedback loops wired into release decisions before reliability becomes guesswork.

McKinsey's infrastructure signal points to a builder reality: scalable agents need governed actions, reliable operational context, and cost controls before they need more demos.

The latest agent news points to a builder shift: agents are becoming transaction systems, office operators, and cost-sensitive infrastructure rather than chat demos.

Moltbook is less important as a viral spectacle than as a messy preview of what breaks when autonomous agents get identity, memory, public channels, and weak security.

Agent builders should choose frameworks by state control, tool contracts, observability, and exit options, not by benchmark tables alone.

A new research survey frames agents as perception, reasoning, memory, and action systems, but the builder takeaway is sharper: architecture choices only matter if your evaluation catches cost, reliability, and tool failure.

The newest observability debate is not which trace viewer looks best, it is whether your agent stack can detect, evaluate, and stop bad decisions before they become customer-facing failures.

IBM's new observability signal points to a larger shift for agent builders: production AI systems now need live topology, output evaluation, cost baselines, and decision traces, not just model latency charts.

Grafana's new AI observability push signals a broader builder shift: production agents now need session traces, output evaluation, risk alerts, and controlled loops back into engineering workflows.

AWS is turning agent support from scattered examples into managed infrastructure, which gives builders a cleaner path to production but also raises new questions about trust, lock-in, and runtime control.

Capgemini's agentic AI survey shows enterprise demand is rising fast, but the real builder constraint is not model access, it is autonomy control, data readiness, observability, and trust.

Anthropic's MCP code execution pattern points to a bigger infrastructure shift: stop stuffing every tool into the prompt, and let agents discover, call, filter, and pass data through a controlled runtime.

A Chrome DevTools MCP server gives coding agents direct browser inspection and control, which could make web debugging more reliable if teams treat it as privileged infrastructure, not a toy connector.

Enterprise agent projects are hitting a scaling wall because the hard part is no longer prompting or model choice, it is delivering governed business context at the moment an agent acts.

The latest wave of AI agent education is moving from generic productivity to relationship building, which forces builders to design for identity, consent, timing, and measurable trust.

IBM's Think 2026 announcements are less interesting as product news than as a signal that enterprise agent builders now need orchestration, data context, operations, and sovereignty designed as one runtime system.

The AI infrastructure boom only makes sense if agents move beyond chat and start consuming compute through long-running, tool-heavy work.

A new public sector AI infrastructure report signals a broader shift for agent builders: production value now depends less on prompt tricks and more on data access, hybrid deployment, cost control, and operational trust.

Gartner's infrastructure prediction is less about chatbots and more about the control plane builders now need for autonomous, auditable operations.

Databricks' latest AI agent signal points to a builder reality: production success is shifting from better prompts to evaluation, governance, data access, and multi-agent operating discipline.

Veo 3.1 and Claude Haiku 4.5 point to a practical shift for agent builders: cheaper specialist models, stronger media actions, and more pressure to route, evaluate, and govern every task.

As agents move from demos to production, the hard work shifts from prompting and tool calls to orchestration, permissions, audit trails, and failure containment.

IDC's 2026 FutureScape puts numbers on a shift agent builders already feel: pilots are giving way to governed, measurable, multi-agent systems that must survive real operations.

EY's infrastructure signal points to a practical builder opportunity: agents that reconcile siloed project, asset, risk, and cost systems without pretending the silos will disappear.

A new enterprise survey signals that agent builders now need to solve integration, data quality, evaluation, and organizational rollout, not just prompt quality.

OpenAI's Codex safety post and SemiAnalysis' InferenceX benchmark show why agent teams need to treat runtime controls and inference cost as product constraints.

Microsoft's Azure AI Foundry introduces new OpenTelemetry semantic conventions for multi-agent systems, enabling unified observability across frameworks.

A deep dive into the most comprehensive list of AI agent frameworks and tools curated for 2026, featuring 340+ resources across 20 categories.

Emerging semantic conventions and improved tooling are reshaping AI agent observability, enabling better monitoring, debugging, and optimization for scalable AI-powered applications.

Microsoft's Agent Framework now integrates with GitHub Copilot SDK, enabling engineers to build robust AI agents with multi-agent workflows and ecosystem support.